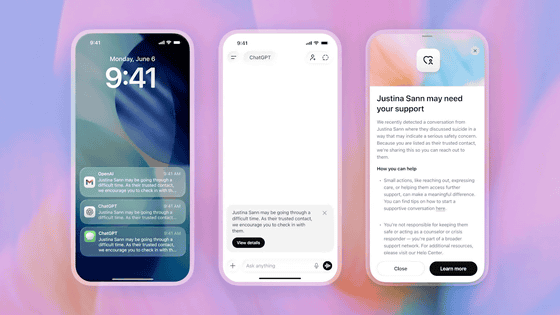

ChatGPT adds a feature that notifies a user's designated trusted contact when the user discusses self-harm.

OpenAI's chat AI, ChatGPT, has added a 'Trusted Contact' feature. If OpenAI's automated system and trained reviewers determine that an adult user's conversation with ChatGPT indicates a serious safety concern about self-harm, a notification is sent to the trusted contact designated by that user.

Introducing Trusted Contact in ChatGPT | OpenAI

ChatGPT's new 'Trusted Contact' feature allows adult users to designate a friend, family member, caregiver, or other trusted person as a trusted contact. If OpenAI's automated system and trained reviewers determine that a user may have discussed self-harm with ChatGPT in a way that raises serious safety concerns, the registered contact is notified of the situation.

The 'Trusted Contact' feature is designed to provide additional support by helping users connect with someone they trust during times of crisis, in addition to the regional helplines already available in ChatGPT.

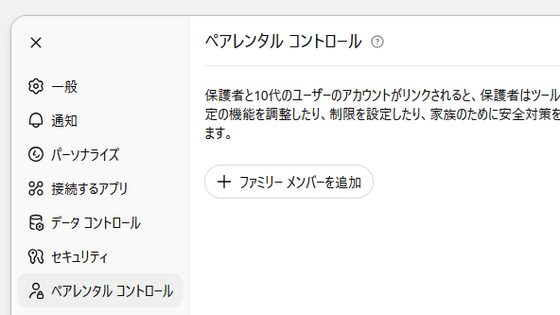

The 'Trusted Contact' feature extends ChatGPT's existing parental control and safety notification features. For teen accounts linked to parental controls, parents or guardians receive an alert when the user engages in risky interactions with ChatGPT, even if no trusted contact is registered.

Details of 'trusted contacts' are as follows:

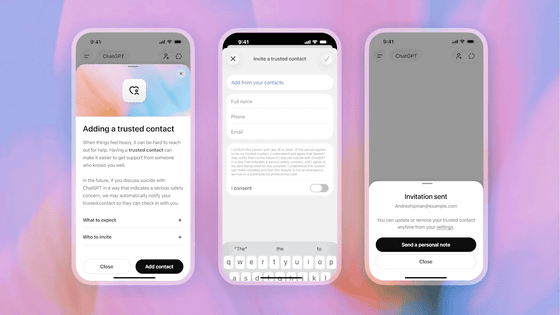

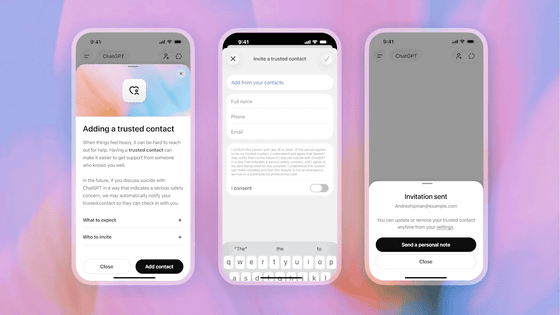

Users can add one adult (18 years or older worldwide, 19 years or older in Korea) as a trusted contact through ChatGPT settings.

Trusted contacts will receive an invitation explaining their role, and they must accept the invitation within one week to activate the feature. If a trusted contact declines the invitation, the user can add another adult.

If OpenAI's automated monitoring system detects that a user may be conversing with ChatGPT about self-harm in a way that indicates serious safety concerns, ChatGPT informs the user that OpenAI may notify their trusted contact. It also encourages the user to reach out to the trusted contact directly and offers suggestions for starting that conversation.

A small, specially trained team then reviews the situation. If the reviewers determine that the conversation may indicate serious safety concerns, ChatGPT sends a brief notification to the trusted contact via email, text message, or (if the contact has a ChatGPT account) in-app notification.

This notification is intentionally limited in scope: it explains in general terms that self-harm came up in a potentially concerning way and encourages the trusted contact to check in with the user. To protect the user's privacy, it does not include chat details or records. The notice also includes

Users can remove or change their trusted contact at any time from the settings screen. In addition, trusted contacts can remove themselves at any time through the Help Center.

Furthermore, OpenAI developed the 'Trusted Contact' feature under the guidance of clinicians, researchers, and organizations specializing in mental health and suicide prevention. This initiative draws on the expertise of a global network of over 260 physicians in 60 countries and an expert council on well-being and AI. OpenAI also appears to be working closely with external organizations, including the American Psychological Association.

OpenAI explains, 'The trusted contact feature is part of OpenAI's broader commitment to building AI systems that help people in difficult situations. We will continue to work with clinicians, researchers, and policymakers to improve how AI systems respond when people may be experiencing distress. Our goal is to help AI systems connect people to the real-world care, relationships, and resources they need most, rather than having them exist in isolation.'

in AI, Posted by logu_ii