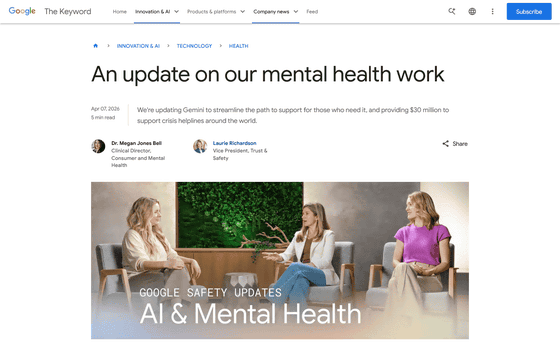

Google has released details on updates to Gemini's mental health safety measures.

AI is increasingly being used as a source of emotional support, with some people treating chatbots like friends or romantic partners in personal conversations or

[Google's mental health work and support for organizations](https://blog.google/innovation-and-ai/technology/health/mental-health-updates/)

On April 7, 2026, Google published an update on its mental health initiatives in a blog post.

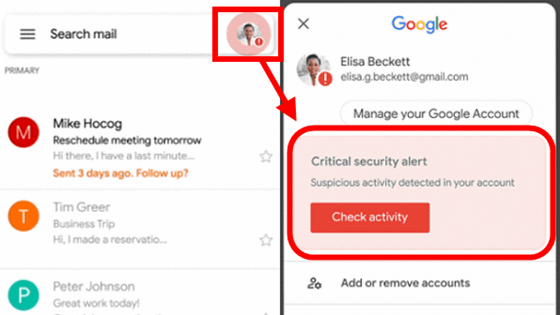

Google's updates can be broadly divided into four points. The first is 'improved access to crisis assistance.' When a conversation suggests that a user may need mental health information, Gemini will display a 'Support is available' module, developed in collaboration with clinical professionals, that connects users to care more quickly and effectively. Gemini also introduces a 'one-touch interface' that connects users instantly to a crisis hotline when it detects a potential crisis related to suicide or self-harm. The second point is funding: Google announced that it will provide a total of $30 million (approximately 4.7 billion yen) over the next three years to support hotlines around the world.

The third point is improving how Gemini responds to situations involving serious mental illness. Google's clinical, engineering, and safety teams are training Gemini to encourage healthier ways of coping with impulses such as self-harm and to avoid affirming false beliefs, while prioritizing safety and human connection. Google states, 'Gemini is a useful tool for learning and gathering information, but it is not a substitute for professional clinical care, therapy, or crisis response support for people who need it. Therefore, when we recognize signs of possible serious mental illness in a conversation, we can guide people to appropriate support.'

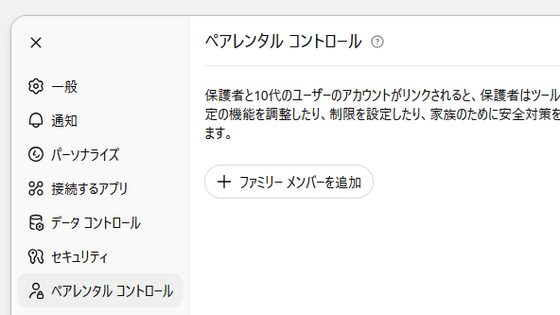

The fourth point is protections for young users. These include a 'personality protection feature' that prevents Gemini from acting like the user's friend or claiming to be human, measures to avoid language that simulates intimacy or expresses desires, and measures to prevent the promotion of bullying and other forms of harassment.

Google explained, 'These updates are part of our long-term commitment to helping people by leveraging Google's cutting-edge technology and the expertise of clinicians and safety professionals. We are excited about the potential of these tools to provide more accessible, compassionate, and effective support.'

Amy Carrierblair, who leads responsible AI product strategy for children and families at Google, said in a video about the AI and mental health updates, 'Rules that simply have AI chatbots refuse to answer or end the session when a conversation turns inappropriate (including conversations about suicide and harassment) have drawbacks, especially when dealing with children.' Megan Jones Bell, clinical director of consumer health, added, 'What really helps people ease their distress and get the support they need is not closing the door on them. We should respond quickly to requests for help while making it clear that the support they need is not something Google products can provide. That is better than simply saying, "We cannot answer that question."'

AI and Mental Health | Google Safety Updates - YouTube
