'Qwen3.6-27B,' a model that runs on a local PC with performance approaching that of Claude Opus 4.5, has been released and is available for anyone to download.

Qwen (Tongyi Lab), an AI research team at the Chinese company Alibaba, has released 'Qwen3.6-27B,' a multimodal AI model with 27 billion parameters. It is licensed under the open Apache License 2.0 and can be used commercially.

Qwen3.6-27B: Flagship-Level Coding in a 27B Dense Model

1/4 Compact Size, Flagship Coding. Qwen3.6-27B is officially open source.

— Tongyi Lab (@Ali_TongyiLab) April 22, 2026

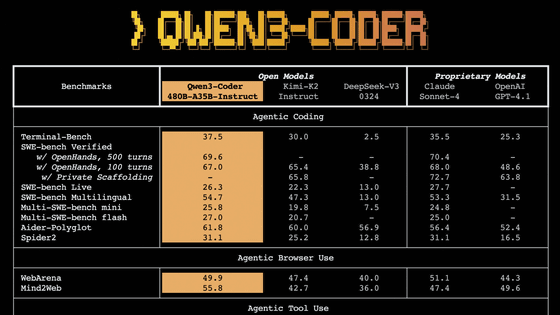

Qwen3.6-27B delivers flagship-level agentic coding performance, surpassing the previous-generation open-source flagship Qwen3.5-397B-A17B across all major coding benchmarks.

Core Capabilities:

• Agentic… pic.twitter.com/YNm8oLtLYb

The Qwen team announced the Qwen3.6 series on April 2, 2026, releasing 'Qwen3.6-Plus' as the flagship model. That announcement stated that 'smaller versions will be released under an open-source license in the future,' and Qwen3.6-27B is one of those smaller versions.

Qwen3.6-Plus Released, Featuring Agent Functionality for Autonomous Task Execution - GIGAZINE

Qwen also released Qwen3.6-35B-A3B on April 15, 2026. That model activates only 3 billion parameters during inference, which keeps it lightweight to run while maintaining high performance.

A Chinese AI called 'Qwen3.6-35B-A3B,' which is more powerful than Gemma4, has been released as an open model - GIGAZINE
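To make the difference between the two open models concrete, the short sketch below compares how much of each model is active per token. It assumes, from the naming convention only, that Qwen3.6-35B-A3B has about 35 billion total parameters, and it treats Qwen3.6-27B as fully dense, as the announcement title describes it. The function name is illustrative.

```python
# Rough comparison of active vs. total parameters (values in billions).
# Assumption: "35B-A3B" means ~35B total and ~3B active parameters.
# Qwen3.6-27B is described as dense, so all 27B are active.

def active_fraction(total_b: float, active_b: float) -> float:
    """Return the share of parameters used for each token."""
    return active_b / total_b

MODELS = {
    "Qwen3.6-35B-A3B": (35.0, 3.0),   # (total, active), assumed from the name
    "Qwen3.6-27B": (27.0, 27.0),      # dense: total == active
}

for name, (total, active) in MODELS.items():
    share = active_fraction(total, active)
    print(f"{name}: {share:.1%} of parameters active per token")
```

Under these assumptions the sparse model still has to keep all of its parameters in memory, even though only a small share is used for each token, while the dense model uses every parameter every time.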

The newly released Qwen3.6-27B has 27 billion parameters and supports both thinking and non-thinking modes. All models in the Qwen3.6 series are multimodal, supporting images and videos, and Qwen3.6-27B is no exception. Qwen says it offers top-level agentic coding capabilities, making it a practical and widely deployable model.

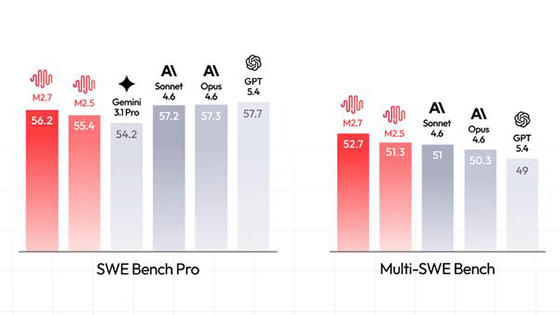

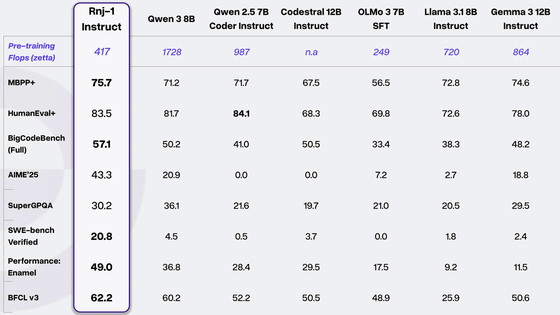

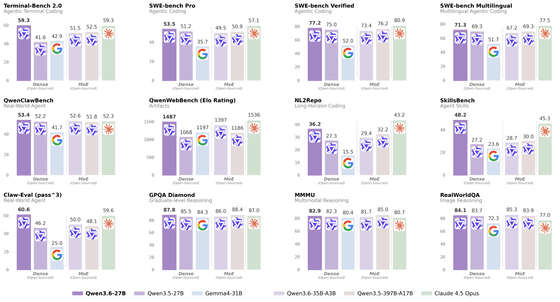

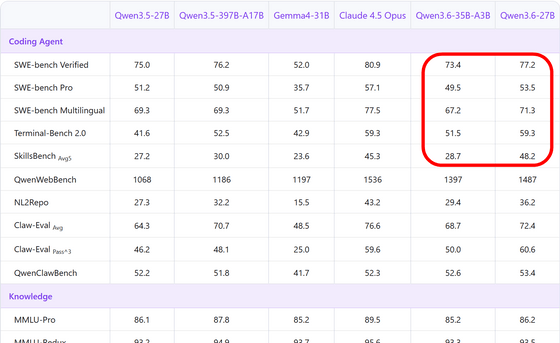

According to benchmark results released by Qwen, the model surpasses its predecessor Qwen3.5-27B and 'Gemma4-31B,' the open model released by Google on April 2, 2026, in all 12 benchmarks, and on some scores it even surpasses 'Claude Opus 4.5,' Anthropic's top-of-the-line model from two generations ago.

Furthermore, it outscored Qwen3.5-397B-A17B, a model with 397 billion total parameters, on major coding benchmarks such as 'SWE-bench Verified,' 'SWE-bench Pro,' 'Terminal-Bench 2.0,' and 'SkillBench,' demonstrating its strength in agentic coding.

Qwen3.6-27B's weights are publicly available on Hugging Face and ModelScope, and can be downloaded and self-hosted. The model is also planned to be available via API on Alibaba Cloud Model Studio in the future. Qwen says it integrates seamlessly with popular third-party coding assistants such as OpenClaw, Claude Code, and Qwen Code, streamlining development workflows. The development team has also released an FP8 quantized version.

Qwen/Qwen3.6-27B · Hugging Face

https://huggingface.co/Qwen/Qwen3.6-27B

Qwen/Qwen3.6-27B-FP8 · Hugging Face

https://huggingface.co/Qwen/Qwen3.6-27B-FP8
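As a rough guide to what self-hosting involves, the sketch below estimates the size of the weights alone at different precisions. It is back-of-the-envelope arithmetic, not a measurement: it assumes exactly 27 billion parameters, assumes 16-bit storage for the unquantized weights, and ignores the KV cache, activations, and the per-block scaling data that real quantized formats add. The function and dictionary names are illustrative.

```python
# Weights-only memory estimate for a 27B-parameter model.
# Assumptions: exactly 27e9 parameters; no KV cache, activations,
# or quantization metadata are counted, so real usage will be higher.

PARAMS = 27e9  # 27 billion parameters, as stated in the article

# Bits stored per parameter for each format (typical values, assumed).
BITS_PER_PARAM = {"BF16": 16, "FP8": 8, "4-bit": 4}

def weight_gb(params: float, bits: int) -> float:
    """Return the approximate weight size in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits / 8 / 1e9

for name, bits in BITS_PER_PARAM.items():
    print(f"{name}: ~{weight_gb(PARAMS, bits):.1f} GB")
```

Under these assumptions the weights come to roughly 54 GB at 16 bits, 27 GB at FP8, and 13.5 GB at 4 bits, which suggests why quantized versions are the practical route for running the model on a single local machine.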

Qwen stated, 'Qwen3.6-27B surpasses the much larger 397 billion parameter Qwen3.5-397B-A17B in terms of tasks important to developers, while being easier to deploy and operate. With the release of Qwen3.6-27B, the Qwen3.6 series now offers a comprehensive suite of models, symbolizing a generation of breakthroughs in agent coding at all scales. We look forward to seeing what will be built with these models.'

Furthermore, Simon Willison reportedly achieved very good results on the Pelican benchmark using a 4-bit quantized version of Qwen3.6-27B.

in AI, Posted by log1d_ts