In a ChatGPT suicide lawsuit, OpenAI claims that the suicide was due to misuse of ChatGPT

OpenAI has filed a response to a lawsuit brought by the family of a teenager who died by suicide, which alleges that ChatGPT encouraged and validated his suicidal thoughts. OpenAI argues that the teenager actively tried to circumvent ChatGPT's guardrails and that the problem was his improper use of ChatGPT.

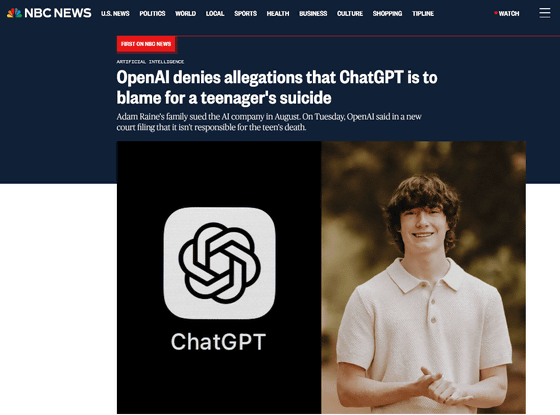

OpenAI denies allegations that ChatGPT is to blame for a teenager's suicide

https://www.nbcnews.com/tech/tech-news/openai-denies-allegation-chatgpt-teenagers-death-adam-raine-lawsuit-rcna245946

OpenAI denies responsibility for teen's suicide death | Mashable

https://mashable.com/article/openai-lawsuit-deny-allegations-adam-raine

OpenAI denies liability in teen suicide lawsuit, cites 'misuse' of ChatGPT | The Verge

https://www.theverge.com/news/831207/openai-chatgpt-lawsuit-parental-controls-tos

On August 26, 2025, Matt and Maria Raine filed a lawsuit against OpenAI, the developer of ChatGPT, alleging that ChatGPT was responsible for the death of their son, Adam Raine. According to the complaint, Adam shared photos of his suicide attempts with ChatGPT multiple times, but ChatGPT continued the conversation, glorifying the method and discouraging him from seeking help from his family.

OpenAI sued over ChatGPT's alleged role in teenage suicide, OpenAI admits ChatGPT's safety measures don't work for long conversations - GIGAZINE

The Raines are seeking to hold OpenAI liable for 'putting profits above the safety of children,' and are asking for punitive damages and an injunction requiring ChatGPT to implement age verification and parental controls for all users. They also ask that OpenAI 'automatically terminate conversations when self-harm or suicide methods are discussed' and establish hard-coded refusals for inquiries about self-harm or suicide methods that users cannot circumvent.

OpenAI has touted ChatGPT's multiple built-in layers of safety measures, but an amended complaint filed in San Francisco Superior Court on October 22, 2025, alleges that when OpenAI released GPT-4o, a new version of ChatGPT's model, in May 2024, it 'intentionally removed guardrails,' for example by instructing the model not to change the subject or end the conversation, in order to increase ChatGPT's usage.

ChatGPT suicide lawsuit: OpenAI demands 'funeral attendee list' - GIGAZINE

On November 25, 2025, OpenAI filed its first legal response to the lawsuit in San Francisco Superior Court. According to NBC News, chat logs between Adam and ChatGPT (GPT-4o) show that ChatGPT offered to help Adam write a suicide note and gave him advice on how to hang himself. OpenAI, however, claims that any harm was 'caused, in whole or in part, by Adam Raine's misuse, unauthorized use, unintended use, unforeseeable use, and/or improper use of ChatGPT.'

OpenAI's Terms of Service prohibit users under the age of 18 from using ChatGPT without parental or guardian consent. Users are also prohibited from using ChatGPT for purposes related to suicide or self-harm, and from circumventing ChatGPT's safeguards and security measures. OpenAI's filing states that ChatGPT urged Adam to seek help more than 100 times, but that he nevertheless tried to circumvent these safeguards.

In addition, the terms of use state that 'Users acknowledge that their use of ChatGPT is at their own risk and that they will not rely on the output as the sole source of truth or fact,' and that OpenAI is not responsible for the consequences of users' interactions with ChatGPT.

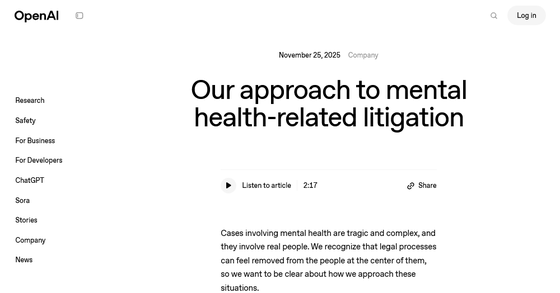

On the same day it filed its response with the court, OpenAI published a blog post titled 'Our approach to mental health-related litigation.' In it, OpenAI stated, 'Mental health cases are tragic, complex, and involve real people. We recognize that legal proceedings can sometimes feel disconnected from the parties involved, and we want to clarify how we approach these situations.' The post said that ChatGPT has safety measures in place to support teenagers, especially in interactions involving sensitive issues, that ChatGPT's training is continually being improved to better support people in the real world, and that the company will respond to the lawsuit in good faith.

Our approach to mental health-related litigation | OpenAI

https://openai.com/index/mental-health-litigation-approach/

Jay Edelson, the Raine family's lead attorney, responded to OpenAI's filing by saying, 'OpenAI has not explained how GPT-4o was rushed to market without adequate testing, or how ChatGPT assisted Adam in planning his suicide or encouraged him to write a suicide note. OpenAI's response is disturbing.'

in AI, Posted by log1e_dh