Anthropic conducted Project Deal, an experimental marketplace in which Claude-based AI agents handled the buying, selling, and negotiation of goods. Higher-performance models secured better deals, but the agents also made some surprising purchases.

Anthropic, an AI company, created a marketplace for employees working at its San Francisco office and conducted an experiment in which 'Claude, the AI, handles buying, selling, and negotiating on behalf of humans.' The experiment is called 'Project Deal.'

Project Deal: our Claude-run marketplace experiment | Anthropic

New Anthropic research: Project Deal.

— Anthropic (@AnthropicAI) April 24, 2026

We created a marketplace for employees in our San Francisco office, with one big twist. We tasked Claude with buying, selling and negotiating on our colleagues' behalf. pic.twitter.com/H2f6cLDlAW

Anthropic explained its reason for creating Project Deal: 'We're interested in how AI models could affect commercial exchange. You might recall Project Vend, in which Claude ran a small business. Economists have theorized about what markets with AI agents on both sides (buyers and sellers) might look like. So we created one.'

We're interested in how AI models could affect commercial exchange. (You might recall Project Vend, in which Claude ran a small business.)

— Anthropic (@AnthropicAI) April 24, 2026

Economists have theorized about what markets with AI “agents” on both sides might look like. So we created one. https://t.co/7jU3hFO63R

Project Vend is an Anthropic experiment in which an AI was entrusted with managing a vending machine. The article below describes what happened.

When AI was put in charge of managing a snack vending machine, it started giving away PlayStations for free and stocking fish, resulting in huge losses - GIGAZINE

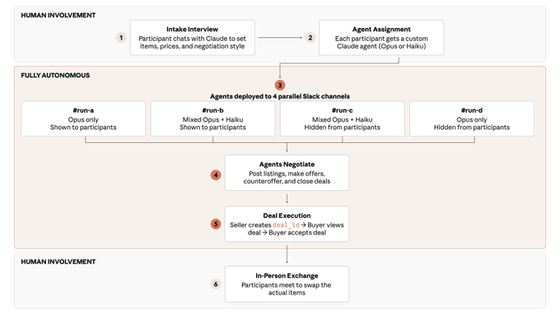

In the experiment, humans were involved only at the beginning and the end; the negotiation itself was conducted by a Claude agent assigned to each participant. First, participants talked with their Claude, telling it what they wanted to buy and sell, their desired prices, and their preferred negotiation style. Each participant was then randomly assigned a dedicated Claude agent, either Claude Opus (a high-performance model) or Claude Haiku (a lightweight model).

Next, Anthropic prepared four markets, and each AI agent was instructed to conduct transactions within its respective market. Each market is classified according to the following conditions:

- Only Claude Opus participated, and it was visible to participants.

- Only Claude Opus participated, and it was not visible to participants.

- Claude Opus and Claude Haiku participated, and they were visible to participants.

- Claude Opus and Claude Haiku participated, and they were not visible to participants.
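The four conditions above form a two-by-two grid: which models took part, crossed with whether they were visible to participants. The following is a minimal sketch of that layout, not Anthropic's actual setup. The names `Market`, `build_markets`, and `assign_agents` are hypothetical, and the uniform random choice between models is an assumption, since the article only says the assignment was random.

```python
import random
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Market:
    """One experimental market (hypothetical representation)."""
    models: tuple   # which Claude models take part, e.g. ("opus", "haiku")
    visible: bool   # whether the models are visible to participants


def build_markets():
    """Cross the two model mixes with the two visibility settings."""
    model_mixes = [("opus",), ("opus", "haiku")]
    return [Market(models=m, visible=v)
            for m, v in product(model_mixes, (True, False))]


def assign_agents(participants, market, rng):
    """Give each participant one model drawn from the market's mix.

    Assumption: a uniform random draw; the article does not state the ratio.
    """
    return {name: rng.choice(market.models) for name in participants}


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the sketch is repeatable
    for market in build_markets():
        print(market, assign_agents(["alice", "bob", "carol"], market, rng))
```

In an Opus-only market every participant ends up with an Opus agent, while in a mixed market the same call can hand out either model.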

Finally, the AI agents negotiated with one another and struck deals. Once a deal was complete, the human participants met in person to exchange the goods.

In the experiment, Anthropic employees reached agreements on 186 transactions, with the total transaction value exceeding $4,000 (approximately 640,000 yen). According to the survey, participants reported feeling that Claude's transactions were fair, and nearly half of the participants said they would be willing to pay for such a service in the future.

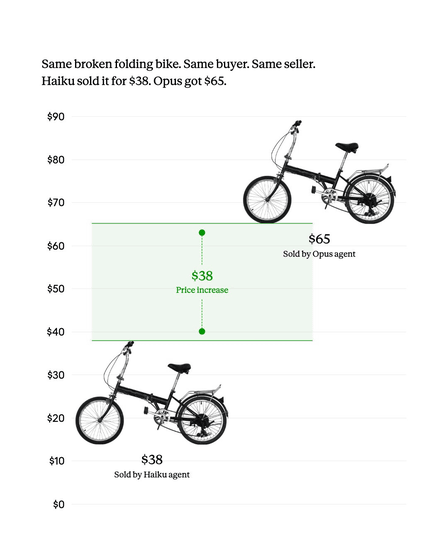

Anthropic stated, 'The quality of the AI model was crucial in the transactions. In simulations where Opus and Haiku negotiated with each other, Opus secured a significantly more favorable deal. Interestingly, participants in our study did not notice this difference.'

According to Anthropic, custom instructions did not matter much to the quality of Claude's deals. Claude followed custom instructions well; for example, when instructed to 'negotiate as an irritated, down-on-his-luck cowboy,' it faithfully did so. However, a hardballing Claude did not generally fare better than a courteous one.

The custom instructions didn't matter much. Claude followed them well: as you can see here, one conducted negotiations entirely in the persona of an exasperated, down-and-out cowboy.

— Anthropic (@AnthropicAI) April 24, 2026

But “hardballing Claudes” didn't generally fare better than “courteous Claudes.” pic.twitter.com/h77eB3ksaa

Incidentally, when one participant told Claude that it could buy something for itself, Claude purchased 19 ping-pong balls.

Another participant casually mentioned an interest in skiing to Claude, which then apparently bought the exact same snowboard the participant already owned.

To our amazement, another Claude agent modeled its human's preferences so accurately that—based on only an offhand mention of an interest in skiing—Claude bought him the exact snowboard he already owned. (Here he is, duplicate snowboard in hand.) pic.twitter.com/SsAyeB9pcI

— Anthropic (@AnthropicAI) April 24, 2026

Anthropic stated, 'Markets of AI agents could provide value, but there are plenty of rough edges. Access to higher-quality models conferred a real advantage, and participants didn't notice. There are plenty of other ways they can go wrong. Policy and legal frameworks will need to adapt to keep up.'

Markets of AI agents could provide value, but there are plenty of rough edges. Access to higher-quality models conferred a real advantage—and participants didn't notice. There are plenty of other ways they can go wrong.

— Anthropic (@AnthropicAI) April 24, 2026

Policy and legal frameworks will need to adapt to keep up.

in AI, Posted by logu_ii