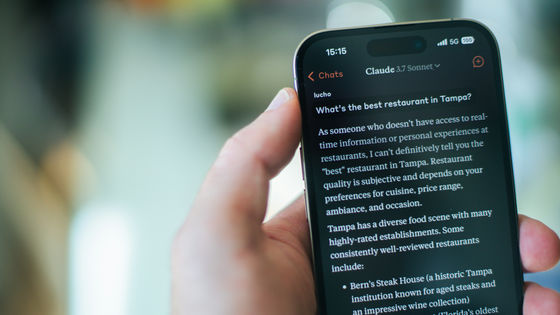

Anthropic, the developer of Claude, has signed a contract with Google and Broadcom to deploy Google's TPUs on a large scale.

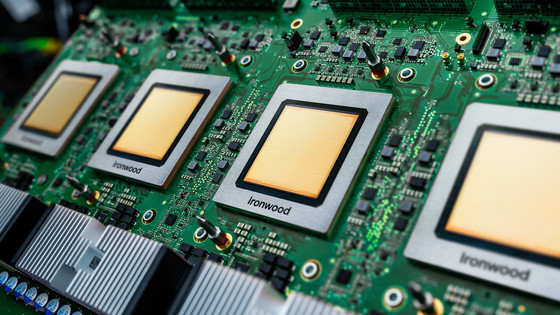

Anthropic, the developer of the AI 'Claude,' has announced a contract with Google and semiconductor manufacturer Broadcom to secure a large supply of TPUs, Google's processors designed specifically for AI workloads.

Anthropic expands partnership with Google and Broadcom for multiple gigawatts of next-generation compute \ Anthropic

Anthropic says the contract covers next-generation TPU capacity on a scale of 'multiple gigawatts.' Details have not been disclosed, but Anthropic will begin using the TPUs on a large scale as a foundation for Claude starting in 2027.

Anthropic stated, 'Our partnerships with Google and Broadcom demonstrate our continued approach to infrastructure scaling. We are building the capacity needed to support exponential growth while enabling Claude to lead the way in AI development.'

The partnership reportedly builds on an agreement Anthropic signed with Google in October 2025. At that time, Anthropic aimed to expand its use of Google Cloud, including more than one million TPUs.

Google and Anthropic officially announce cloud partnership, expanding computing power through the use of over 1 million TPUs - GIGAZINE

According to Anthropic, demand from Claude users has accelerated in 2026, and as of April 2026 annual revenue is already more than three times that of 2025. Fundraising is also going well, with the number of investors doubling from 500 in February 2026 to 1,000 in just two months.

Most of the new infrastructure is planned to be located in the United States. Anthropic stated, 'This partnership represents a significant expansion of our commitment, announced in November 2025, to invest $50 billion (approximately 8 trillion yen) in strengthening America's computing infrastructure.'

Claude is trained and run on a variety of AI hardware, including Google TPUs, AWS Trainium, and NVIDIA GPUs, and Anthropic explains that 'this platform diversity enables us to improve performance and fault tolerance.'
