DeepSeek releases 'DeepSeek-Math-V2,' an AI model specialized for mathematical reasoning that achieves a gold-medal-level score on International Mathematical Olympiad problems

DeepSeek released DeepSeek-Math-V2, an AI model specialized for mathematical reasoning, on November 27, 2025. DeepSeek-Math-V2 focuses on theorem proving and self-verification. Unlike conventional mathematical AI models, it is designed not only to reach correct answers but also to produce a rigorous and complete reasoning process.

GitHub - deepseek-ai/DeepSeek-Math-V2

deepseek-ai/DeepSeek-Math-V2 · Hugging Face

https://huggingface.co/deepseek-ai/DeepSeek-Math-V2

2025 Major Release: How Does DeepSeekMath-V2 Achieve Self-Verifying Mathematical Reasoning? Complete Technical Analysis - CurateClick

https://curateclick.com/blog/deepseekmath-v2

Until now, large language models for mathematics have mainly been trained with reinforcement learning that rewards only whether the final answer is correct. This approach cannot detect flawed intermediate reasoning when the answer happens to come out right. It also fits poorly with advanced mathematics such as theorem proving, where there is often no numerical answer to check and the value lies in a chain of rigorous logic.
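The weakness of final-answer rewards can be shown with a toy example. The sketch below is only an illustration, not DeepSeek's training code: the `Step` class and the `outcome_reward` and `process_reward` functions are hypothetical names, and the "proof" is plain arithmetic in which two mistakes cancel each other out.

```python
from dataclasses import dataclass
from operator import add, mul
from typing import Callable, List


@dataclass
class Step:
    """One arithmetic step: op(a, b) is claimed to equal `claimed`."""
    op: Callable[[int, int], int]
    a: int
    b: int
    claimed: int

    def is_valid(self) -> bool:
        return self.op(self.a, self.b) == self.claimed


def outcome_reward(steps: List[Step], expected: int) -> float:
    """Reward that looks only at the final claimed value."""
    return 1.0 if steps[-1].claimed == expected else 0.0


def process_reward(steps: List[Step], expected: int) -> float:
    """Reward that also requires every intermediate step to be valid."""
    all_valid = all(step.is_valid() for step in steps)
    return 1.0 if all_valid and steps[-1].claimed == expected else 0.0


# Task: evaluate (2 + 3) * 4, whose correct value is 20.
sound = [Step(add, 2, 3, 5), Step(mul, 5, 4, 20)]
lucky = [Step(add, 2, 3, 6), Step(mul, 6, 4, 20)]  # two errors that cancel

print(outcome_reward(sound, 20), process_reward(sound, 20))
print(outcome_reward(lucky, 20), process_reward(lucky, 20))
```

The flawed solution earns the full outcome reward because its last line is right, while the step-aware reward rejects it. Real proofs cannot be checked this mechanically, which is why a learned verifier is needed.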

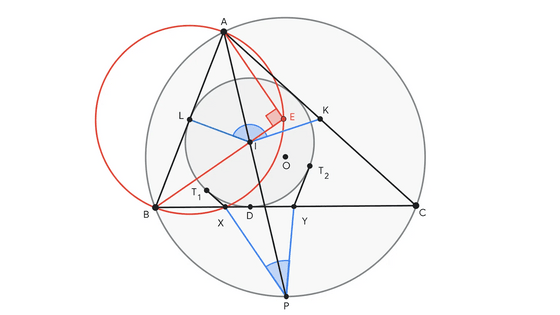

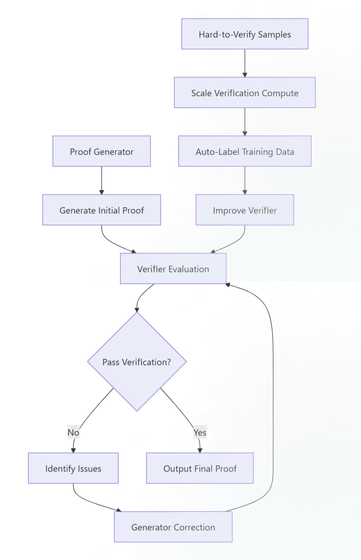

To solve this problem, DeepSeek-Math-V2 combines two models: a 'generator' that writes proofs and a 'verifier' that judges whether those proofs are correct.

DeepSeek-Math-V2 is trained in three stages. First, a verifier is trained, and that verifier then acts as a teacher for training the generator. Next, the generator receives feedback from the verifier and learns to identify and correct errors in its own proofs. Finally, the amount of computation spent on verification is increased so that the correctness of difficult proofs can be judged automatically. Those judged proofs become new training data that further improves the verifier, closing the loop.
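The feedback loop between generator and verifier can be sketched roughly as follows. This is a minimal toy under stated assumptions, not DeepSeek's implementation: `toy_verifier`, `toy_refine`, and `refine_until_verified` are hypothetical names, and string matching on the marker `GAP` stands in for the judgment of a trained verifier model.

```python
from typing import Callable, List, Tuple

Proof = List[str]


def toy_verifier(proof: Proof) -> Tuple[float, List[int]]:
    """Hypothetical verifier: flags steps containing 'GAP', scores in [0, 1]."""
    flagged = [i for i, step in enumerate(proof) if "GAP" in step]
    score = 1.0 - len(flagged) / len(proof) if proof else 0.0
    return score, flagged


def toy_refine(proof: Proof, flagged: List[int]) -> Proof:
    """Hypothetical generator update: repairs the first flagged step only."""
    if not flagged:
        return proof
    fixed = list(proof)
    fixed[flagged[0]] = fixed[flagged[0]].replace("GAP", "justified")
    return fixed


def refine_until_verified(
    proof: Proof,
    verify: Callable[[Proof], Tuple[float, List[int]]],
    refine: Callable[[Proof, List[int]], Proof],
    max_rounds: int = 5,
    threshold: float = 1.0,
) -> Tuple[Proof, List[float]]:
    """Alternate verification and refinement until the score passes."""
    history: List[float] = []
    for _ in range(max_rounds):
        score, flagged = verify(proof)
        history.append(score)
        if score >= threshold:
            break
        proof = refine(proof, flagged)
    return proof, history


draft = [
    "Assume n is even.",
    "GAP: so n^2 is even.",
    "GAP: hence the claim holds.",
]
final_proof, scores = refine_until_verified(draft, toy_verifier, toy_refine)
print(scores)
print(final_proof)
```

In this toy, the verifier's score rises each round as flagged steps are repaired. In the setup the article describes, the verifier's judgment would also serve as the training signal for the generator, rather than only guiding edits at inference time.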

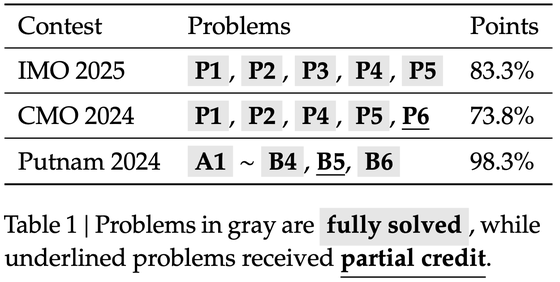

With this approach, DeepSeek-Math-V2 has posted very high scores on problems from major mathematics competitions: a gold-medal-level 83.3% on the 2025 International Mathematical Olympiad (IMO), 73.8% on the 2024 Chinese Mathematical Olympiad (CMO), and 98.3% on the 2024 William Lowell Putnam Mathematical Competition, an undergraduate-level contest in North America.
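To put the percentages in perspective, they can be converted back into points. The sketch below assumes the standard maximum scores, which are not stated in this article: 42 points for the IMO (6 problems worth 7 points each) and 120 points for the Putnam (12 problems worth 10 points each). The function name `implied_points` is hypothetical.

```python
def implied_points(percent: float, max_points: int) -> int:
    """Convert a reported percentage into the nearest whole point total."""
    return round(percent / 100 * max_points)


# Assumed maximum scores (not given in the article).
IMO_MAX = 42
PUTNAM_MAX = 120

imo_points = implied_points(83.3, IMO_MAX)
putnam_points = implied_points(98.3, PUTNAM_MAX)

print(f"IMO 2025: about {imo_points} of {IMO_MAX} points")
print(f"Putnam 2024: about {putnam_points} of {PUTNAM_MAX} points")
```

Under those assumptions, the reported rates correspond to roughly 35 of 42 points on the IMO and 118 of 120 points on the Putnam.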

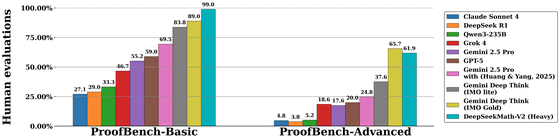

Compared with high-performance models from other companies, it also scored higher than Gemini Deep Think and Claude Sonnet 4 on a benchmark called ProofBench, showing particular strength on difficult problems.

DeepSeek-Math-V2 is built on DeepSeek-V3.2-Exp-Base and can be downloaded from Hugging Face.

in AI, Posted by log1i_yk