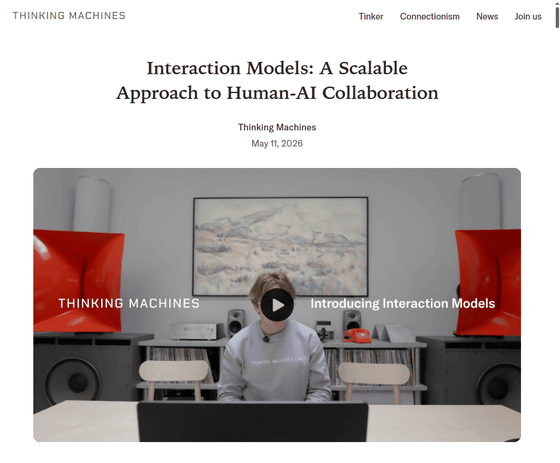

Thinking Machines Lab, the AI company recently founded by OpenAI's former CTO, has announced a research preview of 'Interaction Models,' AI models that replace the conventional turn-based exchange of input and output with real-time interaction.

Interaction Models: A Scalable Approach to Human-AI Collaboration - Thinking Machines Lab

https://thinkingmachines.ai/blog/interaction-models/

Introducing interaction models | Thinking Machines Lab - YouTube

Existing AI research often treats autonomous operation as the most important capability of an AI model. As a result, existing AI models and interfaces are not designed on the assumption that humans will stay involved in decision-making.

Autonomous interfaces have value, but in real-world business, users cannot fully specify requirements in advance and leave everything to the AI. For good results, a collaborative process where humans are constantly involved, providing clarification and feedback along the way, is essential. However, humans are being excluded from AI-driven workflows. This isn't because humans aren't needed for the work, but because the interface simply doesn't have room for human involvement.

Instead, humans need to be able to collaborate with AI the same way they collaborate with other people: sending messages, talking, listening, seeing, showing, and offering opinions as needed.

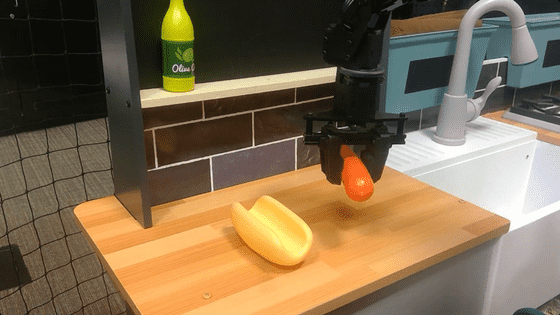

To address these challenges, Thinking Machines Lab developed 'Interaction Models,' AI models designed for collaboration between AI and humans. Interaction Models handle interaction natively, without relying on external frameworks. They continuously take in audio, video, and text, which lets them think, respond, and act in real time.

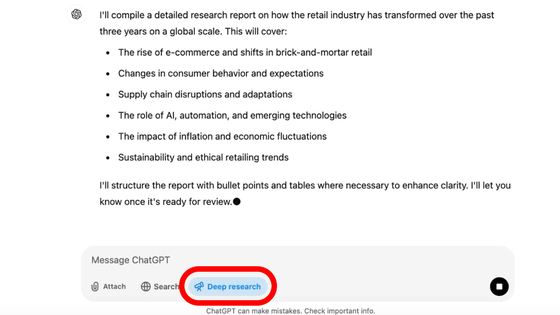

Thinking Machines Lab sums up the problem with AI to date by saying, 'We needed to move away from turn-based interfaces.' An existing AI model waits until the user has finished typing or speaking, with no awareness of what the user is doing or how they are doing it in the meantime. Then, once it starts generating a response, the model stops perceiving and accepts no new input until generation is complete. Thinking Machines Lab explains that this hinders collaboration between humans and AI, and prevents human knowledge, intentions, and judgments from being conveyed correctly to the AI model.
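The difference between the two interaction styles can be illustrated with a toy simulation. This is only a sketch under stated assumptions, not Thinking Machines Lab's implementation: the `Chunk` type, the `turn_based` and `interleaved` functions, and the fixed `gen_ticks` generation time are all hypothetical names invented for illustration. Time is modeled as integer ticks, and a "response" is just the text the model had available when it answered.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    """One piece of user input (hypothetical type for this sketch)."""
    t: int                     # arrival time, in ticks
    text: str
    end_of_turn: bool = False  # True when the user stops and expects a reply


def turn_based(chunks, gen_ticks=3):
    """Buffer input until the turn ends; ignore input that arrives while generating."""
    log, buffer, busy_until = [], [], -1
    for c in chunks:
        if c.t < busy_until:
            # The model is still generating, so this input is never perceived.
            log.append((c.t, "ignored", c.text))
            continue
        buffer.append(c.text)
        if c.end_of_turn:
            log.append((c.t, "respond", " ".join(buffer)))
            buffer = []
            busy_until = c.t + gen_ticks
    return log


def interleaved(chunks):
    """Perceive every chunk on arrival, even while output is in progress."""
    log, context = [], []
    for c in chunks:
        context.append(c.text)
        log.append((c.t, "perceived", c.text))
        if c.end_of_turn:
            log.append((c.t, "respond", " ".join(context)))
    return log


# The user interrupts at tick 2, while the turn-based model is still generating.
chunks = [
    Chunk(0, "plan a"),
    Chunk(1, "bike trip", end_of_turn=True),
    Chunk(2, "wait, for kids", end_of_turn=True),
    Chunk(5, "thanks", end_of_turn=True),
]

for name, fn in [("turn-based", turn_based), ("interleaved", interleaved)]:
    print(name)
    for entry in fn(chunks):
        print("  ", entry)
```

In the turn-based log, the correction at tick 2 is marked `ignored` because it arrives during generation. In the interleaved log, every chunk is perceived and the correction is part of the context of the next response.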

Thinking Machines Lab explains that by enabling real-time conversations with AI, they aim to transform it from a system where humans are forced to adapt to the AI interface, into one where AI can adapt to human input and output.

Thinking Machines Lab explained the need for Interaction Models, stating, 'Most existing AI models add interactivity as an afterthought using harnesses. In other words, they connect components and mimic interruption, multimodality, and parallelism. However, these manually constructed systems are falling behind the advancements in general-purpose functionality. For interactivity to scale with intelligence, interactivity itself must be part of the AI model. This approach allows us to extend AI models and make them smarter and better collaborators.'

The most distinctive feature of Interaction Models is their seamless dialogue management. Interaction Models can track whether the user is thinking, yielding the floor to the AI, or waiting for a response. You can see how Interaction Models interact with humans in the video below.

Dialog management | Thinking Machines Lab - YouTube

Furthermore, Interaction Models can interject not only once the user has finished speaking, but also mid-conversation whenever the situation calls for it.

The following video demonstrates how Interaction Models react when they visually detect that a user sitting in front of a PC is slouching in their chair.

Visual interjection | Thinking Machines Lab - YouTube

In the following video, a user discusses a children's cycling trip with Interaction Models. Even when the user talks over the model while it is speaking, the model takes the user's utterances into account and adjusts its output accordingly. The real-time exchange feels just like a conversation between two people.

Audio interjection | Thinking Machines Lab - YouTube

In the following video, Interaction Models translate in real time between two speakers who use different languages. When a speaker uses offensive language that is inappropriate for collaboration, Interaction Models can adjust the translation on the fly so that it is more appropriate for the situation.

Simultaneous speech | Thinking Machines Lab - YouTube

In the following video, three users are tested on their general knowledge using Interaction Models. The models accurately keep track of the users' answers while simultaneously counting down.

Time-awareness | Thinking Machines Lab - YouTube

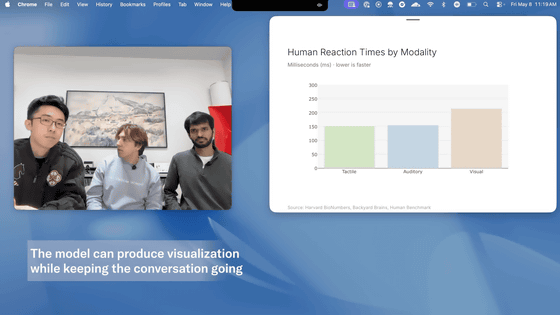

Interaction Models can create diagrams and graphs in real time while searching for information on the internet during conversations with users, and can weave them into the conversation as needed.

Generative UI | Thinking Machines Lab - YouTube

Interaction Models can think, process, and retrieve information while conversing with users. As shown in the video below, they can even provide recommendations for movies currently showing.

Search | Thinking Machines Lab - YouTube

In longer sessions, everything happens continuously, so it feels less like 'giving instructions to the AI' and more like 'working together with the AI.'

Diet manager | Thinking Machines Lab - YouTube

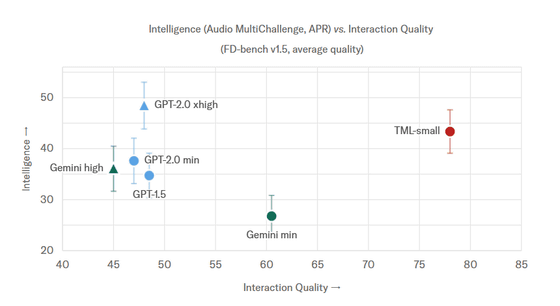

The graph below compares Interaction Models with existing AI models on intelligence, instruction-following ability, and response quality. The vertical axis shows the Audio MultiChallenge score, a benchmark for AI intelligence and instruction-following ability. The horizontal axis shows the Full-Duplex-Bench v1.5 (FD-bench v1.5) score, a benchmark for response quality that assesses how naturally a real-time speech AI handles overlapping speech and interruptions from humans. Interaction Models (TML-Interaction-Small) clearly outperform existing AI models, especially in response quality.

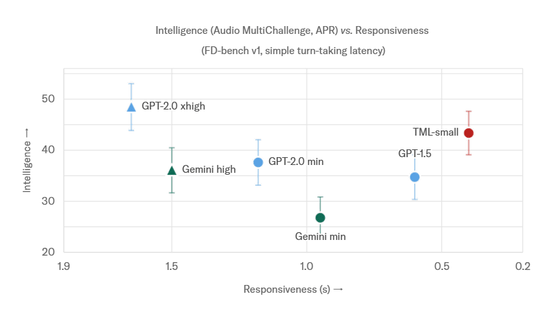

The following graph plots the AI's intelligence, measured by Audio MultiChallenge (vertical axis), against its responsiveness, measured by FD-bench v1 turn acquisition latency (horizontal axis). Responsiveness here means how quickly the AI begins its output, and Interaction Models respond far faster than existing AI models.

Thinking Machines Lab plans to release a limited research preview version in the next few months to gather feedback, with a public release planned for 'sometime in 2026.'
