Engineer argues that apps shouldn't rely on the cloud for every AI function, and that 'local AI should be the standard'

IT engineer Cyrus Lopez has posted an article arguing that developers should not reach for cloud AI by default, and should instead make local AI running on the device the standard. In the article, he points out that while integrating the OpenAI and Anthropic APIs into apps may seem convenient, such integrations are fragile, pose a significant privacy risk, and are complex to operate.

Local AI Needs to be the Norm - unix.foo

In Lopez's opinion, the moment an app sends user text or article content to an external AI service, it transforms from a mere convenience feature into a service burdened with data storage, consent, auditing, data leaks, and government disclosure requests. Furthermore, AI functions that require external communication are affected by network conditions, AI provider service outages, API restrictions, billing status, and the state of the company's own servers. Lopez criticizes this, arguing that companies are creating costly distributed systems when they only intended to create a simple summarization function.

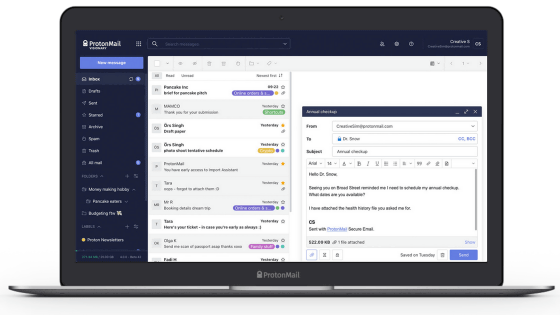

As a concrete example of using local AI, Lopez cites 'The Brutalist Report,' his own news aggregation service and iOS app. The Brutalist Report generates article summaries on the device using Apple's local model API. No data is sent to a server, prompts and user logs are not sent externally, and the feature works without an AI provider account. Since the text of the article the user is reading is already on the device, the summary can be generated on the device as well.

Furthermore, Lopez points out that local AI is better suited to transforming the user's own data than to retrieving knowledge from around the world. Summarizing emails, extracting tasks from notes, classifying documents, and organizing text are all practical tasks that a small model on a smartphone can handle without a massive cloud AI system. Lopez states that the way to gain user trust is not to write long privacy policies, but to design systems from the outset so that user data is never shared externally.

While acknowledging that 'local AI is not as powerful as cloud AI,' Lopez states that many app functions do not require the capabilities of state-of-the-art models. What is needed is the ability to reliably perform summarization, classification, extraction, rewriting, and normalization of variations in spelling.

Lopez does not reject cloud AI entirely, acknowledging that there are situations where high-performance models in the cloud are truly necessary. However, he emphasizes that 'there is no need to send user data to an external server for AI functions that can be processed on the device.' Lopez concludes the article by arguing that user data should remain where it belongs, on the user's device, and that AI functions should be treated as stable, in-app processing functions rather than simply being embedded as a chat box.
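
The local-first policy described above can be illustrated with a small routing sketch. This is not code from Lopez's article or from The Brutalist Report; the names `run_feature`, `LOCAL_TASKS`, and `toy_local_summarizer` are hypothetical, and the "models" are plain functions standing in for whatever on-device or cloud model an app would actually call. The point is only the shape of the decision: tasks of the kind the article lists stay on the device, and anything else reaches the cloud only when a cloud model is configured and the user has agreed.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical stand-in: a "model" is just a function from text to text.
Model = Callable[[str], str]

# Task types the article describes as practical for small on-device models.
LOCAL_TASKS = {"summarize", "classify", "extract", "rewrite", "normalize"}


@dataclass
class Result:
    output: str
    ran_on: str  # "device" or "cloud"


def run_feature(
    task: str,
    text: str,
    local_model: Model,
    cloud_model: Optional[Model] = None,
    user_consented: bool = False,
) -> Result:
    """Route a feature request local-first (illustrative policy only)."""
    if task in LOCAL_TASKS:
        # User text never leaves the process on this path.
        return Result(local_model(text), "device")
    if cloud_model is not None and user_consented:
        # Only tasks outside the local set, with explicit consent, go out.
        return Result(cloud_model(text), "cloud")
    raise PermissionError(f"task {task!r} needs cloud AI, which is not permitted")


def toy_local_summarizer(text: str) -> str:
    # Toy placeholder for an on-device model: keep only the first sentence.
    return text.split(".")[0].strip() + "."


article = "Local AI keeps data on the device. It also works offline."
summary = run_feature("summarize", article, toy_local_summarizer)
```

With this arrangement the summarization path has no network dependency at all, so provider outages, API limits, and billing status cannot affect it; only the explicit cloud branch carries those risks.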
