SubQ emerges: an efficient AI model that can handle 12 million tokens, breaks the limits of the Transformer, and is designed to outperform Claude Opus on long contexts

AI development company Subquadratic has announced its AI model, 'SubQ.' SubQ is built on an architecture that differs from the mainstream Transformer-based AI models, and it offers a massive context window of up to 12 million tokens. Its test model, 'SubQ 1M-Preview,' also significantly outperforms Claude Opus 4.7 when processing very large inputs.

Subquadratic — Efficiency is Intelligence

Introducing SubQ: The First Fully Subquadratic LLM

https://subq.ai/introducing-subq

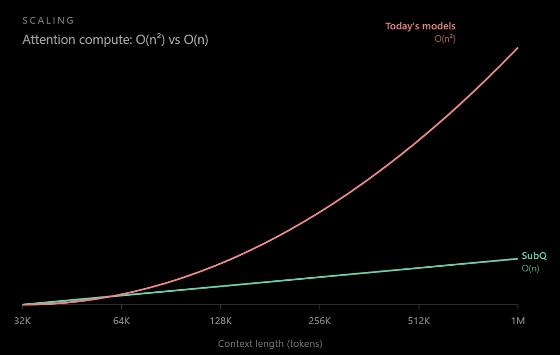

At the time of writing, mainstream AI models are built on a machine learning architecture called the 'Transformer.' According to Subquadratic, Transformer-based AI models have a problem: 'as the number of input tokens increases, the amount of computation required grows in proportion to the square of the number of input tokens.' SubQ instead uses a different, more efficient architecture whose computational cost grows in proportion to the number of input tokens. In other words, its efficiency advantage over existing AI models widens as inputs get longer.
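To make the difference between quadratic and linear growth concrete, here is a minimal Python sketch that simply counts idealized operations. The article does not describe SubQ's actual architecture, so this is not SubQ's method. `full_attention_scores` counts the query-key scores in one full self-attention layer, and `linear_cost` is a hypothetical linear-time layer with an assumed constant amount of work per token. Both function names and the per-token constant are illustrative assumptions.

```python
def full_attention_scores(n: int) -> int:
    """Query-key scores in one full self-attention layer: each of n tokens scores all n tokens."""
    return n * n


def linear_cost(n: int, work_per_token: int = 1) -> int:
    """Hypothetical linear-time layer: a fixed (assumed) amount of work per token."""
    return work_per_token * n


for n in (1_000, 1_000_000, 12_000_000):
    quad, lin = full_attention_scores(n), linear_cost(n)
    # The ratio equals n itself, so the gap keeps widening as inputs grow.
    print(f"{n:>12,} tokens  quadratic: {quad:.1e}  linear: {lin:.1e}  ratio: {quad // lin:,}")

# Doubling the input quadruples the quadratic count but only doubles the linear one.
assert full_attention_scores(2_000) == 4 * full_attention_scores(1_000)
assert linear_cost(2_000) == 2 * linear_cost(1_000)
```

Real subquadratic models can carry a much larger per-token constant than 1, so the practical speedup is smaller than this raw ratio suggests; the point is only how the two curves diverge at long context lengths.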

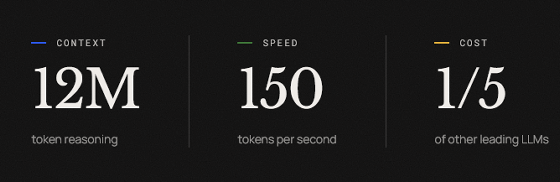

According to Subquadratic, SubQ accepts inputs of up to 12 million tokens and runs 150 times faster per token than existing AI models at one-fifth the cost.

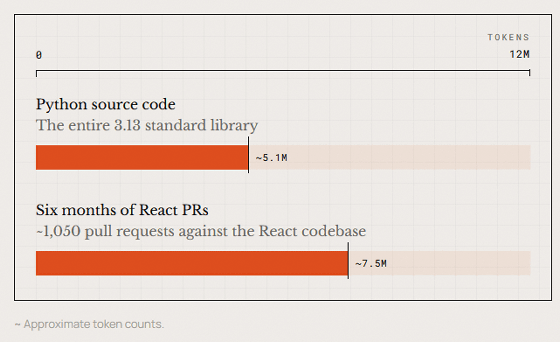

For reference, the entire source code of Python 3.13, including the standard library, comes to 5.1 million tokens, while six months of pull requests submitted to the React project come to 7.5 million tokens. SubQ can take in either of these codebases in a single pass.
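The comparison behind these figures is simple arithmetic against the 12-million-token window. A minimal Python sketch, using the token counts reported in the article (the tokenizer used to produce them is not stated) and a hypothetical `fits` helper, checks each corpus on its own and both together:

```python
# Token counts as reported in the article; the tokenizer behind them is not stated.
CONTEXT_WINDOW = 12_000_000  # SubQ's stated maximum input, in tokens

corpora = {
    "Python 3.13 source incl. standard library": 5_100_000,
    "React pull requests over six months": 7_500_000,
}


def fits(tokens: int, window: int = CONTEXT_WINDOW) -> bool:
    """Hypothetical helper: True if an input of `tokens` tokens fits in the context window."""
    return tokens <= window


for name, tokens in corpora.items():
    print(f"{name}: {tokens:,} tokens, fits={fits(tokens)}")

combined = sum(corpora.values())
print(f"Both together: {combined:,} tokens, fits={fits(combined)}")
```

Each corpus fits on its own, but the two combined (12.6 million tokens) slightly exceed the 12-million-token limit, so they would not fit in one request together.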

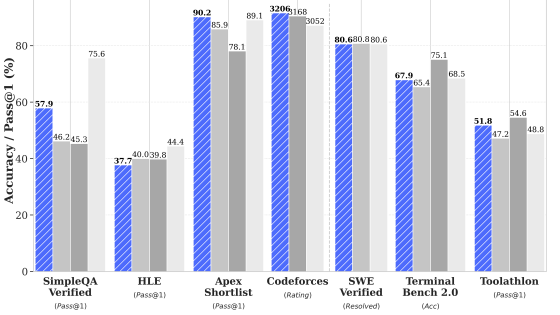

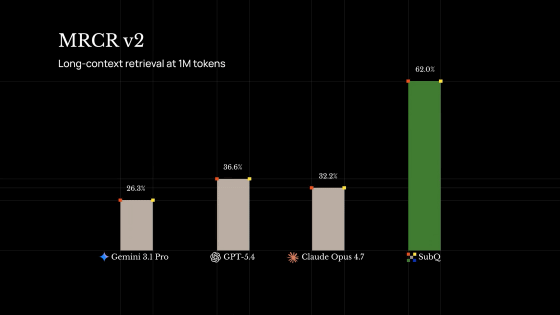

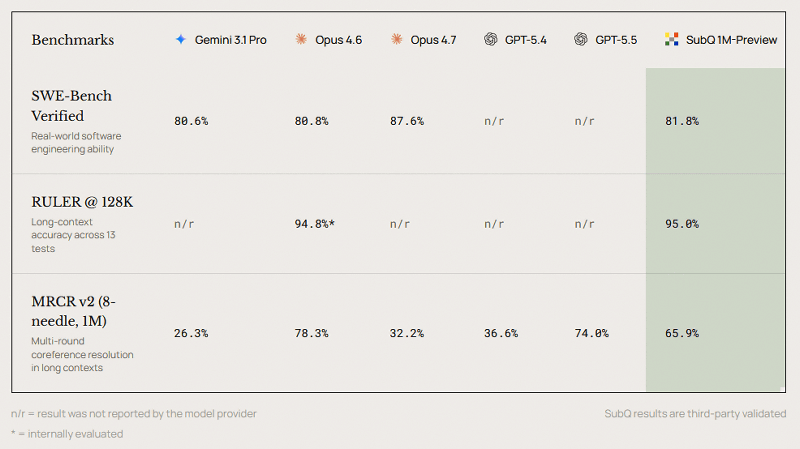

The benchmark table compares SubQ 1M-Preview, a test version of SubQ, with 'Gemini 3.1 Pro,' 'Claude Opus 4.6,' 'Claude Opus 4.7,' 'GPT-5.4,' and 'GPT-5.5.' On SWE-Bench Verified, which measures coding-agent performance, SubQ 1M-Preview scored higher than Gemini 3.1 Pro and Claude Opus 4.6. It also outperformed Claude Opus 4.7 on the 1-million-token input test of MRCR v2, a benchmark that evaluates long-context comprehension.

Subquadratic is offering its coding agent, SubQ Code, and its information-analysis AI, SubQ Search, in private beta, and early access to the SubQ API has also begun.

in AI, Posted by log1o_hf