Anthropic co-founder explains why there's a more than 60% chance that AI systems will autonomously build successor systems by the end of 2028.

Anthropic's multi-agent system, '

Import AI 455: AI systems are about to start building themselves.

https://importai.substack.com/p/import-ai-455-automating-ai-research

I've spent the past few weeks reading 100s of public data sources about AI development. I now believe that recursive self-improvement has a 60% chance of happening by the end of 2028. In other words, AI systems might soon be capable of building themselves.

— Jack Clark (@jackclarkSF) May 4, 2026

Citing the rapid advances in AI coding and autonomous research capabilities in recent years, Clark said he believes 'we are living in an era where AI research will be end-to-end automated.' He added that 'as this progress continues, we will cross the Rubicon into an unpredictable future (a point of no return),' and asserted that there is a greater than 60% chance that AI systems powerful enough to autonomously build their successors will exist by the end of 2028.

Clark pointed to the rapid improvement in AI capabilities in recent years as evidence. For example, on 'SWE-Bench,' a benchmark of software development capability, the highest score in 2023 was around 2%, recorded by Claude 2, while 'Claude Mythos Preview,' released in April 2026, achieved 93.9%. Some point out that certain versions of SWE-Bench have significant problems and question whether the benchmark can still meaningfully distinguish among top-scoring systems, but there is no doubt that performance has improved dramatically in just a few years.

Furthermore, estimates by METR, a research organization that evaluates how well AI systems can carry out research and development on AI systems themselves, indicate that the length of tasks AI can work on without human intervention is also increasing rapidly. In 2022, GPT-3.5 could only handle tasks on the scale of about 30 seconds, while in 2024, GPT-4.01 reached 4 minutes, and in 2025, GPT 5.2 (High) reached about 6 hours. Claude Opus 4.6, announced in February 2026, is said to be able to perform tasks on the scale of about 12 hours.

Anthropic announces Claude Opus 4.6, improving performance not only for coding but also for financial processing and document creation, and supporting context windows with up to 1 million tokens - GIGAZINE

Ajeya Cotra, a longtime AI forecaster at METR, said that progress in AI has been so remarkable that the predictions she made in January 2026 seemed conservative just two months later. She then predicted that 'by the end of 2026, the length of tasks AI systems can handle will exceed 100 hours.'
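To put these figures in perspective, the following minimal sketch counts how many doublings separate the time horizons quoted above. It uses only the numbers reported in this article (about 30 seconds, about 12 hours, and the predicted 100 hours). The helper name `doublings` is hypothetical, and the calculation assumes simple exponential growth, which is an illustration rather than METR's own methodology.

```python
import math


def doublings(start_seconds: float, end_seconds: float) -> float:
    """Number of times the horizon must double to go from start to end."""
    return math.log2(end_seconds / start_seconds)


HOUR = 3600  # seconds

# Figures as quoted in the article (approximate, not METR's raw data).
gpt35_2022 = 30            # about 30 seconds
opus46_2026 = 12 * HOUR    # about 12 hours
predicted_2026 = 100 * HOUR  # predicted to exceed 100 hours by end of 2026

print(f"30 s -> 12 h:  {doublings(gpt35_2022, opus46_2026):.2f} doublings")
print(f"12 h -> 100 h: {doublings(opus46_2026, predicted_2026):.2f} doublings")
```

Under these assumptions, the jump from 30 seconds to 12 hours is roughly ten and a half doublings, while reaching 100 hours from 12 hours would take only about three more.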

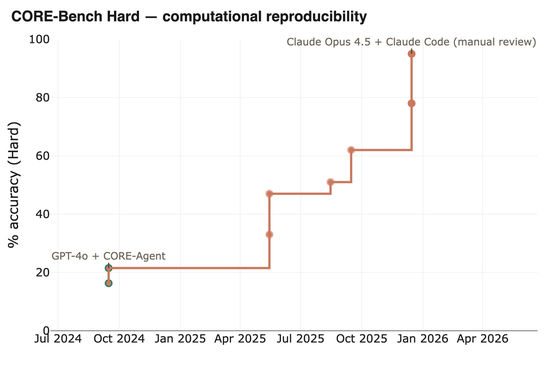

According to Clark, much of the work of AI researchers comes down not to flashes of genius but to painstaking tasks that take humans hours, such as cleaning data, reading data, and launching experiments. He believes that once the length of tasks AI can handle grows sufficiently, all of the work of AI researchers will fall within that range. For example, one important task in AI research is reading scientific papers and reproducing their results. AI capabilities have improved so much that scores on CORE-Bench, a benchmark of agents' computational reproducibility, have reportedly risen from a top score of approximately 21.5% at the time of its release to 95.5% in about a year.

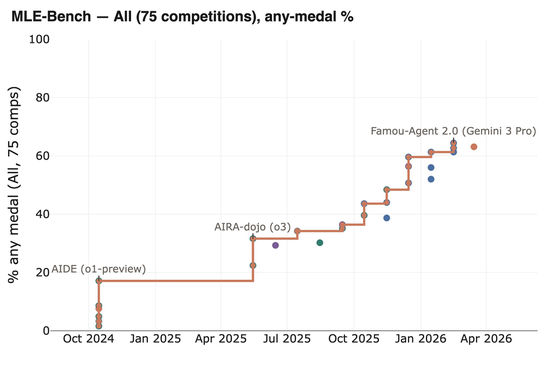

Similar advancements can be seen in

Furthermore, Clark points out that AI is already beginning to partially automate AI research itself. A proof-of-concept study of automated alignment research, published by Anthropic in April 2026, tested whether humans can adequately oversee rapidly advancing AI. In the experiment, an AI was fine-tuned under the supervision of either a human or Claude Opus 4.6 so that it would give the best answers it could, and the degree to which its performance improved was recorded. The score under human supervision was 0.23, while Claude Opus 4.6 ultimately achieved a significantly higher score of 0.97. Anthropic cautions that 'the fact that AI improved its score does not mean that cutting-edge AI has already become an AI alignment scientist,' but the result does point to a possible future in which AI monitors AI.

Can humans keep track of ever-so-intelligent AI? Anthropic conducts an experiment to monitor AI with AI - GIGAZINE

On the other hand, Clark also warns of the risks that come with such 'recursive self-improvement.' If AI begins to accelerate its own research and development, small errors could accumulate as AI repeatedly generates AI, a fundamental 'compound error' problem that is common to a wide range of fields. Even a system that is '99.9% accurate' would, in theory, see its accuracy fall to 95.12% after 50 generations and to 60.5% after 500 generations, which means that accuracy cannot be maintained simply by having AI continue to supervise AI. Furthermore, if progress reaches the point where AI building AI is taken for granted, the impact on economic and social structures would be extremely large and could lead to a variety of social and political problems.
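The compounding arithmetic behind these figures can be checked with a short sketch. It assumes the simplest possible model, in which each generation independently retains 99.9% of the previous generation's accuracy, so accuracy after n generations is 0.999 raised to the power n. The function name `compounded_accuracy` is hypothetical, and this model is an illustration rather than Clark's own calculation.

```python
def compounded_accuracy(per_generation: float, generations: int) -> float:
    """Accuracy remaining after repeated generations.

    Assumes each generation independently keeps `per_generation`
    of the accuracy of the one before it (a simplifying assumption).
    """
    return per_generation ** generations


for n in (1, 50, 500):
    print(f"{n:>3} generations: {compounded_accuracy(0.999, n):.2%}")
```

Under this model, 50 generations give about 95.12%, matching the figure quoted above, and 500 generations give about 60.6%, close to the quoted 60.5%.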

Based on all this data and these insights, Clark concludes that 'there is about a 60% chance that by the end of 2028, automated AI research and development (that is, state-of-the-art models autonomously training their successor versions) will be possible.' He puts the probability of this happening by the end of 2027 at only about 30%, because AI systems in 2026 have not yet shown, in any novel and significant way, the creativity and unconventional insight that AI research requires, so further progress is needed. Clark also states that if this does not happen by the end of 2028, it will be taken as revealing a fundamental flaw in the current technological paradigm, and some new invention will be needed to make it happen.


in AI, Posted by log1e_dh