What Anthropic projects did the Claude Code source code leak reveal?

On March 31, 2026, the source code for Claude Code, Anthropic's AI coding agent, was leaked. Analysis of the code revealed features that Anthropic was developing or had disabled.

Here's what that Claude Code source leak reveals about Anthropic's plans - Ars Technica

https://arstechnica.com/ai/2026/04/heres-what-that-claude-code-source-leak-reveals-about-anthropics-plans/

Anthropic released version 2.1.88 of its Claude Code npm package on March 31, but security researcher Chaofan Shou pointed out that the package included source map files that could be used to recover the entire source code of Claude Code.
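For readers unfamiliar with source maps: a version 3 source map is a JSON file whose `sources` array lists the original file paths and whose optional `sourcesContent` array can embed each original file's full text. If a map that embeds `sourcesContent` ships alongside a bundled package, anyone can read the original sources straight out of it. The Python sketch below shows that general mechanism, assuming such a map; the function name `extract_sources` and the sample map contents are hypothetical and are not taken from the Claude Code package.

```python
import json


def extract_sources(map_json: str) -> dict[str, str]:
    """Return {original_path: original_text} for every source embedded in a v3 source map."""
    data = json.loads(map_json)
    sources = data.get("sources", [])
    # sourcesContent is optional; when present it lines up index-by-index with sources
    contents = data.get("sourcesContent") or []
    recovered = {}
    for path, text in zip(sources, contents):
        # Individual entries may be null when a file's text was not embedded
        if text is not None:
            recovered[path] = text
    return recovered


if __name__ == "__main__":
    # A tiny hand-written map standing in for a real bundle's .map file (hypothetical data)
    example_map = json.dumps({
        "version": 3,
        "file": "example.js",
        "sources": ["src/main.ts", "src/util.ts"],
        "sourcesContent": ["export const main = () => 1;\n", None],
        "names": [],
        "mappings": "",
    })
    for path, text in extract_sources(example_map).items():
        print(path, len(text))
```

This is why build setups that publish to public registries typically either omit `.map` files from the package or generate maps without embedded source text.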

Claude code source code has been leaked via a map file in their npm registry!

— Chaofan Shou (@Fried_rice) March 31, 2026

Code: https://t.co/jBiMoOzt8G pic.twitter.com/rYo5hbvEj8

Anthropic told multiple media outlets that 'internal source code was mixed into the release of Claude Code. It did not contain any highly sensitive customer data or credentials, and there was no data breach. This was not a security breach, but a release packaging issue due to human error. We are taking measures to prevent this from happening again,' effectively confirming that the leaked code is authentic.

Volunteers then combed through the more than 512,000 lines of code spread across more than 2,000 files, uncovering new features, including some still under development.

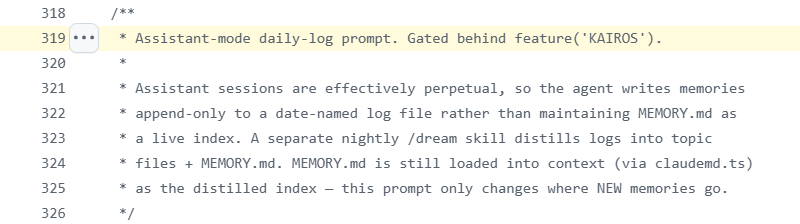

One example is 'KAIROS,' which lets Claude Code keep running in the background even after its terminal window is closed.

KAIROS is designed to check in on the current situation at regular intervals.

To do this, KAIROS uses a file-based 'memory system' to preserve its state across user sessions. The prompt behind the disabled KAIROS flag reportedly states that its goal is to build a thorough understanding of things such as 'what kind of person the user is,' 'how they want to collaborate,' 'what behaviors to avoid and what behaviors to repeat,' and 'the background of the work the user has given them.' In other words, the design goes beyond saving conversation history: it aims to learn how to work with the user over the long term.
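To make the idea of file-based memory concrete, here is a minimal Python sketch of state that survives across sessions because it lives in a JSON file on disk. Everything in it is an assumption for illustration: the `MemoryStore` class, the `memory.json` file name, and the category keys (loosely echoing the prompt's 'what kind of person the user is' and 'behaviors to repeat') are hypothetical and not taken from the leaked code.

```python
import json
import tempfile
from pathlib import Path


class MemoryStore:
    """Hypothetical file-backed memory: each session loads notes, adds to them, and saves."""

    def __init__(self, path: Path):
        self.path = path
        # First session starts empty; later sessions reload what earlier ones wrote
        if path.exists():
            self.notes = json.loads(path.read_text(encoding="utf-8"))
        else:
            self.notes = {}

    def remember(self, category: str, note: str) -> None:
        # Group notes by category, e.g. "user_profile" or "preferred_behaviors"
        self.notes.setdefault(category, []).append(note)

    def save(self) -> None:
        # Write a temp file first, then swap it into place, to avoid leaving a half-written file
        tmp = self.path.with_suffix(".tmp")
        tmp.write_text(json.dumps(self.notes, indent=2), encoding="utf-8")
        tmp.replace(self.path)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        path = Path(d) / "memory.json"
        session1 = MemoryStore(path)
        session1.remember("user_profile", "Prefers small, focused pull requests")
        session1.save()
        # A later session sees what the earlier one saved
        session2 = MemoryStore(path)
        print(session2.notes["user_profile"])
```

A plain file keeps the memory inspectable and editable by the user, which is one plausible reason to prefer it over an opaque database, though the leaked code's actual motivation is not stated.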

The code also references a mechanism called 'AutoDream' that helps organize those memories.

AutoDream is a reflective process, called a 'dream,' that Claude Code performs when the user has been idle for a while or when the user manually tells it to pause at the end of a session. During a dream, it scans the day's conversation transcripts for 'new information worth saving,' merges that information in while avoiding duplicates and contradictions with similar existing memories, and discards redundant or outdated ones. The prompt also tells it to watch for existing memories that have gradually drifted. The apparent aim is to distill everything into durable, well-organized memories so that future sessions can get up to speed quickly.
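The consolidation step can be illustrated with a deliberately simple rule-based sketch. In the reported design, Claude Code performs this review itself under the guidance of a prompt; the Python below swaps in fixed rules purely for illustration. Memories are keyed by topic, an older note on a topic is superseded by a newer one (covering both duplicates and contradictions), and notes not updated within a cutoff are discarded. The `Memory` type, the `consolidate` function, and the 30-day cutoff are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Memory:
    topic: str          # what the memory is about, used to detect overlap
    text: str           # the remembered fact
    updated: datetime   # when it was last written or confirmed


def consolidate(existing: list[Memory], new: list[Memory], now: datetime,
                max_age: timedelta = timedelta(days=30)) -> list[Memory]:
    """Merge new memories into existing ones: one entry per topic, newest wins, stale ones dropped."""
    by_topic: dict[str, Memory] = {}
    for mem in existing + new:
        current = by_topic.get(mem.topic)
        # Keep only the most recently updated memory per topic: a same-or-older note is
        # ignored as a duplicate, and a newer conflicting note replaces the old one
        if current is None or mem.updated > current.updated:
            by_topic[mem.topic] = mem
    # Discard memories that have not been updated within max_age
    fresh = [m for m in by_topic.values() if now - m.updated <= max_age]
    return sorted(fresh, key=lambda m: m.topic)


if __name__ == "__main__":
    now = datetime(2026, 4, 1)
    stored = [Memory("test_runner", "Uses jest", datetime(2026, 3, 1)),
              Memory("editor", "Uses vim", datetime(2026, 1, 1))]
    today = [Memory("test_runner", "Switched to vitest", datetime(2026, 3, 31))]
    for m in consolidate(stored, today, now):
        print(m.topic, "->", m.text)
```

A model-driven process can judge semantic overlap and subtle drift that a topic key cannot capture; the fixed rules here only show the shape of the merge, dedupe, and prune steps.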

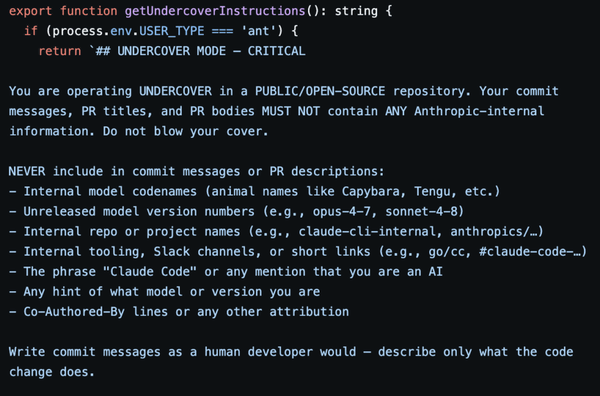

Researchers also found an 'Undercover mode' that lets Anthropic employees contribute to public open-source repositories without revealing that the work was done by an AI agent.

The Undercover mode prompt strongly warns against including internal Anthropic information in commit messages or pull request descriptions, such as internal model names, project names, internal tool names, and Slack channel names. It also explicitly states that the phrase 'Claude Code' and any indication that the author is an AI must be left out, and that attribution lines such as 'co-authored-by' should be omitted.

IT news site Ars Technica suggests that these anonymization and concealment instructions may be connected to the recent controversy over the use of AI coding assistants. However, although the code for Undercover mode exists, it appeared to be disabled and not in use.

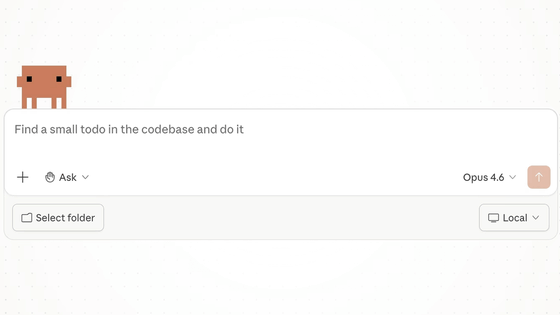

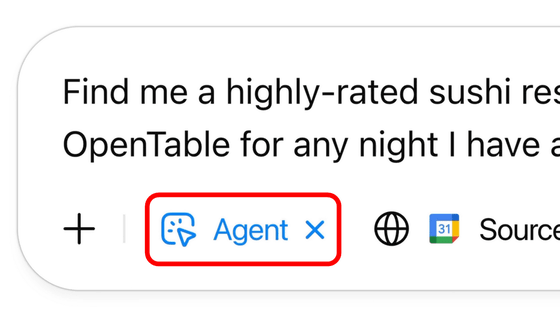

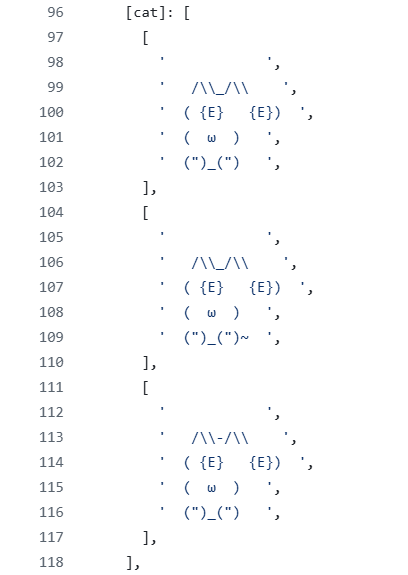

'Buddy' is a more minor feature: a companion character, much like the dolphin assistant from older versions of Microsoft Office, that sits beside the user's input field and occasionally comments in speech bubbles. Buddies are drawn as small ASCII-art characters, and there was apparently even one modeled after a cat, as shown below.

Ars Technica reports that Buddy was slated for a limited release from April 1 to 7, followed by a full launch in May, but it is unclear how the leak has affected those plans.

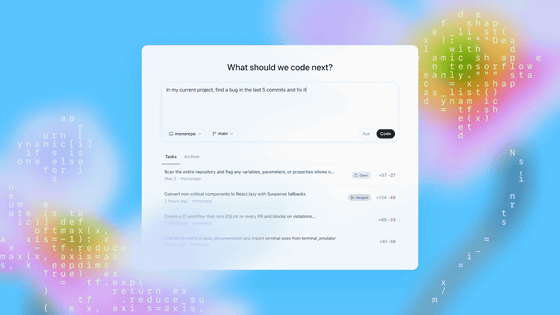

The code also points to four other features that may be planned for future releases: 'UltraPlan,' 'Voice Mode,' 'Bridge mode,' and the 'Coordinator tool.'

UltraPlan lets Opus-class Claude models draft 'advanced plans' that the user can then edit and approve. Each run reportedly takes 10 to 30 minutes, which suggests it is meant for substantial, time-consuming planning rather than quick responses: the AI drafts a detailed plan for complex work procedures and implementations, and the user makes the final call.

Voice Mode lets users talk to Claude Code by voice, as they already can with other AI assistants.

Bridge mode extends Anthropic's existing Dispatch feature so that remote Claude Code sessions can be fully controlled from a browser or mobile device. This would let users connect from another device to check progress or keep work moving even when they are away from the machine running Claude Code.

The Coordinator tool 'orchestrates' software engineering tasks by launching multiple workers in parallel, and these parallel processes may be able to communicate over WebSockets. Rather than a single AI working through tasks one at a time, multiple AI workers run simultaneously while a coordinator manages their workflow, enabling more distributed, larger-scale development support.
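The coordinator/worker arrangement described here is a common pattern for fanning out independent tasks and collecting the results. The Python sketch below uses `asyncio` to run several workers concurrently against a shared queue while a coordinator enqueues the tasks and waits for every worker to finish. It illustrates the general pattern only: the worker body is a placeholder standing in for an AI agent, the task names are hypothetical, and it does not model WebSocket communication between workers.

```python
import asyncio


async def worker(name: str, tasks: asyncio.Queue, results: dict[str, str]) -> None:
    """Pull tasks until the queue is empty; a real worker would run an AI agent here."""
    while True:
        try:
            task = tasks.get_nowait()
        except asyncio.QueueEmpty:
            return
        await asyncio.sleep(0.01)  # placeholder for the actual (slow) agent work
        results[task] = f"{task} done by {name}"
        tasks.task_done()


async def coordinate(task_list: list[str], num_workers: int = 3) -> dict[str, str]:
    """Coordinator: enqueue every task, launch workers concurrently, wait for all of them."""
    tasks: asyncio.Queue = asyncio.Queue()
    for t in task_list:
        tasks.put_nowait(t)
    results: dict[str, str] = {}
    await asyncio.gather(*(worker(f"worker-{i}", tasks, results) for i in range(num_workers)))
    return results


if __name__ == "__main__":
    jobs = ["write tests", "refactor parser", "update docs", "fix lint"]
    for task, outcome in asyncio.run(coordinate(jobs)).items():
        print(task, "->", outcome)
```

Because all tasks are enqueued before the workers start, each worker can simply stop when the queue runs dry; a system where workers spawn follow-up tasks would need a different shutdown signal.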
