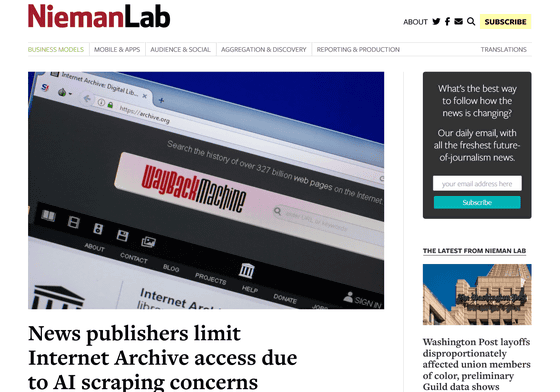

News media companies restrict access to Internet archives due to concerns about AI data collection

The Internet Archive, which collects and preserves vast amounts of online content, also stores page versions and dates and times, and the total number of stored web pages exceeded 1 trillion in October 2025. Many of the pages stored by the Internet Archive are accessible through the public tool Wayback Machine, but several news publishers have taken steps to restrict access to the Internet Archive due to concerns that the Internet Archive's commitment to free information access could be used as training data for AI.

News publishers limit Internet Archive access due to AI scraping concerns | Nieman Journalism Lab

https://www.niemanlab.org/2026/01/news-publishers-limit-internet-archive-access-due-to-ai-scraping-concerns/

Robert Hahn, head of business affairs and licensing at The Guardian, a major British magazine, said that while investigating bots attempting to extract content from The Guardian, he discovered through access logs that the Internet Archive was frequently crawling the site. 'Many AI companies are looking for readily available, structured content databases,' Hahn said. 'The Internet Archive's API would have been a great place for them to connect their machines and extract copyrighted texts.'

As a result, The Guardian has removed access to certain APIs and article pages from the Wayback Machine index, preventing the article text from being retrieved in a structured form. Regarding the reason for not completely blocking them, Hahn said, 'We support the nonprofit's mission of democratizing information.'

The New York Times also revealed that at the end of 2025, it added the Internet Archive crawl bot name 'archive.bot' to its site's robots.txt file, hard blocking article crawling. A New York Times spokesperson said, 'We believe in the value of human-led journalism at The New York Times and always want to ensure that our intellectual property is accessed and used lawfully. The Wayback Machine provides free access to content without permission by any entity, including AI companies, so we are blocking access by the Internet Archive bot.'

To find out whether other news media are adopting similar blocking measures, the Nieman Lab , a journalism institute at Harvard University, conducted a study by reading the robots.txt files of a database of 1,167 news sites. The results revealed that 241 news sites in nine countries explicitly block at least one of the Internet Archive's four crawler bots. This data is exploratory, based on a list of news sites, 76% of which are based in the United States, and does not represent comprehensive trends across the global industry. Furthermore, the study found that 240 of the 241 sites also target an archive called ' Common Crawl ,' which is closely related to AI training.

Common Crawl, a non-profit organization that has built an archive of AI learning sources such as OpenAI, has been scraping billions of web pages, including paid pages, since 2013 - GIGAZINE

'The Internet Archive is widely seen as a 'good' organization, but it's being used by 'evil' organizations like OpenAI,' said Michael Nelson, a computer scientist at Old Dominion University . 'We're collateral damage in a world where everyone hates AI control.'

When asked about the blocking by news media, Brewster Kahle, founder of the Internet Archive, expressed concern that 'if publishers restrict libraries like the Internet Archive, it could prevent the public from accessing historical records, which could hinder the Internet Archive's efforts to combat information chaos.' In an October 2025 Mastodon post, Kahle stated, 'While the Internet Archive's open datasets welcome bulk downloads, there are many collections that cannot be downloaded by users,' and mentioned measures to control information access through filtering and restrictions.

Related Posts:

in AI, Web Service, Posted by log1e_dh