TimeCapsule LLM, a large language model trained exclusively on data from 1800 to 1875

Training a large language model requires a large dataset, and any bias in that dataset carries over into the model's output and behavior. TimeCapsule LLM turns this property to its advantage: it is a large language model trained only on a dataset limited to the period from 1800 to 1875.

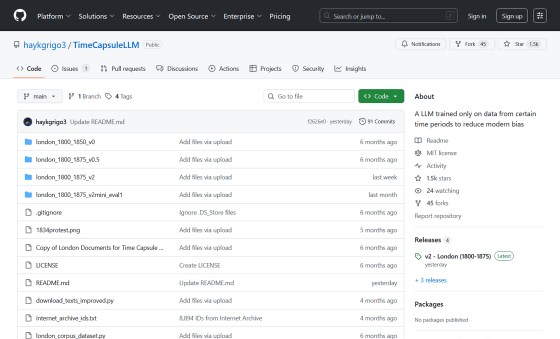

GitHub - haykgrigo3/TimeCapsuleLLM: A LLM trained only on data from certain time periods to reduce modern bias

TimeCapsule LLM is a large language model trained exclusively on datasets from 1800 to 1875. Its aim is to exclude the biases and values of the present day and to imitate the tone, vocabulary, and worldview of the period. Developer Hayk Grigorian (haykgrigo3) writes, 'Imagine what would happen if an AI model were actually historical, rather than just pretending to be historical.'

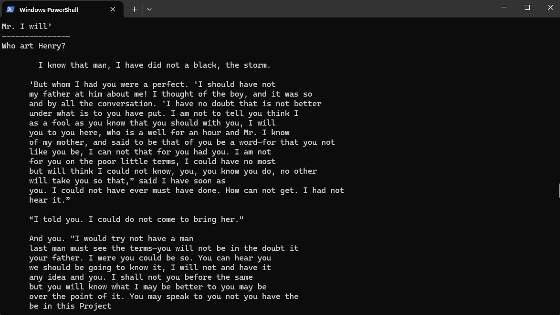

Versions 0 and 0.5 of TimeCapsule LLM were built on nanoGPT, a simple, lightweight implementation of GPT-style models, and were trained on a very small dataset. In an example exchange with version 0, the response to the input 'Who art Henry?' uses archaic vocabulary reminiscent of the 1800s, but its content is incoherent.

Version 0.5 significantly improved punctuation and grammar, but it still hallucinated very frequently, and its output still contained noise that appears to stem from OCR errors in the training text.

Version 1 of TimeCapsule LLM was built on

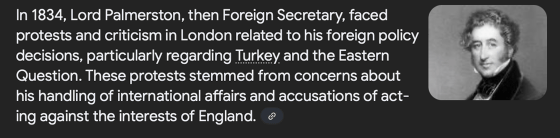

When I entered the prompt 'It was the year of our Lord 1834,' the text the model generated in response mentioned a protest in London. On checking, I confirmed that there was indeed a protest in London in 1834 directed at a man named Henry John Temple.

In addition, version 2 is currently under development. It will be trained on a total of 90GB of text from London between 1800 and 1875, including books, legal documents, newspapers, and other documents.

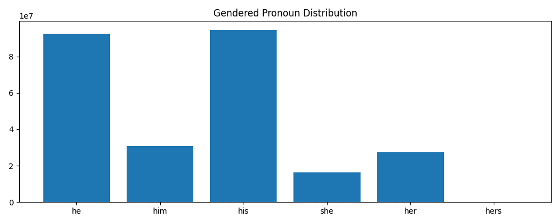
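The period restriction described above can be pictured as a simple filter over document metadata. The following is a minimal sketch only, assuming each document carries a known publication year. The `Document` class, its fields, and the function names are hypothetical and are not taken from the TimeCapsule LLM repository.

```python
from dataclasses import dataclass


@dataclass
class Document:
    """Hypothetical record for one source text."""
    title: str
    year: int  # publication year, assumed to be available as metadata
    text: str


def within_period(doc: Document, start: int = 1800, end: int = 1875) -> bool:
    """Return True if the document's year falls inside the inclusive range."""
    return start <= doc.year <= end


def filter_corpus(docs, start: int = 1800, end: int = 1875):
    """Keep only the documents published inside the inclusive range."""
    return [doc for doc in docs if within_period(doc, start, end)]


if __name__ == "__main__":
    # Made-up sample entries, for illustration only.
    corpus = [
        Document("Sample pamphlet", 1799, "..."),
        Document("Sample newspaper", 1834, "..."),
        Document("Sample legal record", 1875, "..."),
        Document("Sample novel", 1876, "..."),
    ]
    for doc in filter_corpus(corpus):
        print(doc.year, doc.title)
```

In practice, dating historical texts reliably is likely to be harder than this sketch suggests, since reprints and later editions can carry text or annotations from outside the target period.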

The data collected to develop version 2 contains various biases that reflect the social climate of the time. The graph below counts the personal pronouns that appear in the dataset, and masculine pronouns such as 'he/him/his' are overwhelmingly more common than feminine pronouns such as 'she/her/hers.'

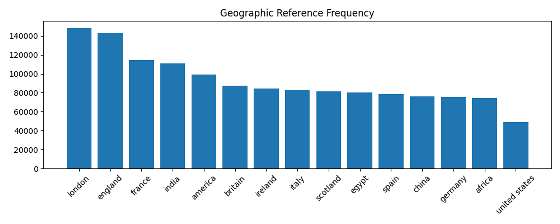
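A pronoun comparison like the one described above can be approximated with a whole-word frequency count. This is a minimal sketch, not the developer's actual analysis: the grouping, the function name, and the sample sentence are assumptions made for illustration.

```python
import re
from collections import Counter

# Hypothetical grouping based on the pronouns named in the article.
PRONOUN_GROUPS = {
    "masculine": {"he", "him", "his"},
    "feminine": {"she", "her", "hers"},
}


def count_pronoun_groups(text: str) -> Counter:
    """Count case-insensitive whole-word pronoun occurrences per group."""
    counts = Counter({group: 0 for group in PRONOUN_GROUPS})
    # Splitting into alphabetic words avoids matching "he" inside "the".
    for word in re.findall(r"[a-z]+", text.lower()):
        for group, pronouns in PRONOUN_GROUPS.items():
            if word in pronouns:
                counts[group] += 1
    return counts


if __name__ == "__main__":
    sample = "He gave his hat to her, and she thanked him."
    counts = count_pronoun_groups(sample)
    print("masculine:", counts["masculine"])
    print("feminine:", counts["feminine"])
```

For a corpus of many gigabytes, the same counting would presumably be run file by file and the per-file counters summed, rather than loading all the text at once.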

The chart below shows how often place names are mentioned in the dataset. 'London' and 'England' appear most often, while 'Africa' and 'United States' appear far less frequently.

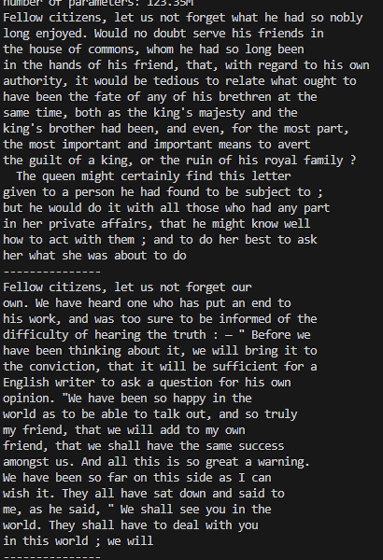

At the time of writing, version 2 does not yet exist because the full dataset has not been tokenized, but a 'v2mini-eval1/2' version trained on only 15GB of the 90GB of data is available. Below is an example response from 'v2mini-eval1/2'.

Input: Who is

Output: Who is Charles Dickens? Are his great and moving works more to be found in his plays or in the great corporate farms? Is there anything else to quote from Jack Pickett? Don't you know they were a galloping race, or the sawing of the chest, or what Jackson should have done when he was staring out the window? His son never told me what English success is, or how to achieve its weight. Have you ever made a path to the old road? Or to the point where you must leave us, the wind that blows at the bow of our ship? In fact, whether the wind blows up from the plains on Saturday night, or at noon, or blows north, or in any other direction, we are forced to describe a formidable barrier with the same effect as the present situation. It is fair to say that, at least in this situation, we have left room for it... (omitted)

TimeCapsule LLM has also been a hot topic on the social news site Hacker News.

TimeCapsuleLLM: LLM trained only on data from 1800-1875 | Hacker News

https://news.ycombinator.com/item?id=46590280

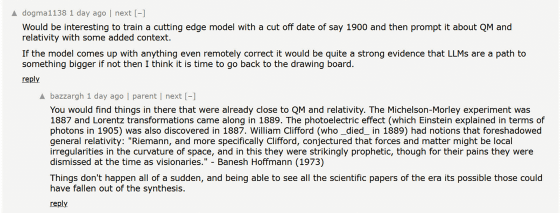

One user argued that 'if we could train a state-of-the-art model only on data from up to 1900 and, given additional context, have it produce correct results about quantum mechanics and the theory of relativity, that would be evidence that large language models are capable of major discoveries.' The special theory of relativity was published in 1905, so if an AI trained only on information predating that publication could derive it, one could say that AI has the 'power to make scientific breakthroughs.'

in AI, Posted by log1h_ik