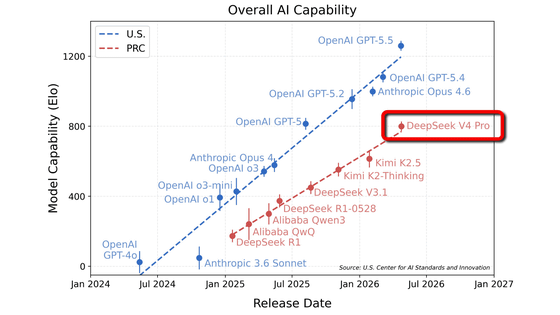

'DeepSeek V4 Pro is about eight months behind the leading AI models in the United States, but is currently the most powerful Chinese-made AI model,' reports the Center for AI Standards and Innovation (CAISI), the U.S. government's AI risk management agency.

CAISI Evaluation of DeepSeek V4 Pro | NIST

https://www.nist.gov/news-events/news/2026/05/caisi-evaluation-deepseek-v4-pro

DeepSeek, a Chinese AI company, announced its latest AI model, 'DeepSeek-V4,' at the end of April 2026. DeepSeek-V4 comes in two models: DeepSeek-V4-Pro and DeepSeek-V4-Flash. DeepSeek-V4-Pro is a high-spec model with a total of 1.6 trillion parameters.

The 'DeepSeek-V4' has finally arrived, an open-top model with performance exceeding that of the Claude Opus 4.6 - GIGAZINE

CAISI conducted an evaluation of the open-weight model DeepSeek V4 Pro and pointed out that 'DeepSeek V4 Pro is about eight months behind the most advanced AI.'

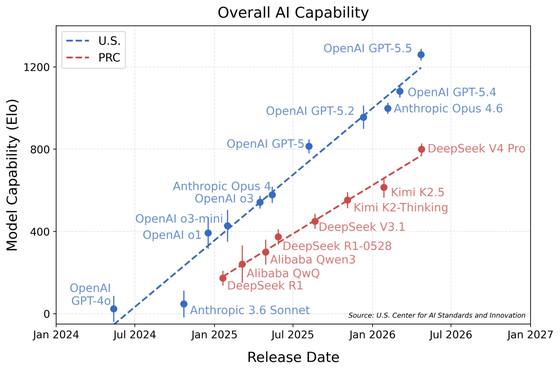

The graph compares publicly available AI models on a series of benchmarks covering five domains. The vertical axis shows the overall benchmark score, with higher scores indicating better performance, and the horizontal axis shows each model's release date. American-made models are shown in blue and Chinese-made models in red. DeepSeek V4 Pro, released in April 2026, performed comparably to GPT-5, which OpenAI released in August 2025, eight months earlier.

DeepSeek V4 Pro scored approximately 200 points higher than Kimi K2.5, previously the highest-scoring Chinese-made AI model. In CAISI's five-domain benchmark, a 200-point increase in the overall score means the model is about three times more likely to solve a given task.
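As a rough illustration of how a 200-point gap can translate into a roughly threefold advantage, the sketch below assumes an Elo-style logistic scale in which 400 points correspond to a tenfold change in odds. That convention is an assumption made for this example and is not stated in the article; CAISI's actual scoring may differ. On such a scale, it is the odds of solving a task, rather than the raw probability, that are multiplied by about three.

```python
# Assumption: an Elo-style logistic scale (400 points = 10x odds).
# This convention is illustrative only and is not taken from CAISI's report.

def odds_ratio(score_gap: float, scale: float = 400.0) -> float:
    """Multiplier applied to the odds of solving a task for a given score gap."""
    return 10 ** (score_gap / scale)


def solve_probability(base_probability: float, score_gap: float,
                      scale: float = 400.0) -> float:
    """Success probability of the stronger model, given the weaker model's."""
    base_odds = base_probability / (1.0 - base_probability)
    new_odds = base_odds * odds_ratio(score_gap, scale)
    return new_odds / (1.0 + new_odds)


if __name__ == "__main__":
    print(f"odds multiplier for +200 points: {odds_ratio(200):.2f}")
    # Hypothetical task that the lower-scoring model solves 10% of the time.
    print(f"success probability after +200 points: {solve_probability(0.10, 200):.3f}")
```

Under this assumption, the multiplier applies to odds, so the gain in probability is close to threefold only for tasks the weaker model rarely solves.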

Furthermore, the CAISI evaluation employs an approach inspired by

The benchmark tests performed were as follows:

◆ Cyber-related

CTF-Archive-Diamond: A private benchmark that measures practical hacking skills, specifically the ability to disrupt systems and exploit vulnerabilities.

◆ Software engineering-related

SWE-Bench Verified: A benchmark that measures the programming capabilities of AI.

PortBench: A private benchmark for measuring the software portability capabilities of AI.

◆ Natural sciences-related

FrontierScience: A benchmark that measures research-level scientific reasoning capabilities of AI.

GPQA-Diamond: A benchmark that measures expert-level scientific knowledge and reasoning abilities of AI.

◆ Abstract reasoning-related

ARC-AGI-2 semi-private: A semi-private evaluation set of the ARC-AGI-2 benchmark, published by the ARC Prize Foundation, which promotes benchmarks for artificial general intelligence (AGI).

◆ Mathematics-related

OTIS-AIME-2025: A mathematical reasoning benchmark for AI that uses extremely difficult problems related to the International Mathematical Olympiad.

PUMaC 2024: Problems from the 2024 Princeton University Mathematics Competition (PUMaC), a contest for high school students run by Princeton University students.

SMT 2025: A benchmark that measures the mathematical reasoning capabilities of AI.

DeepSeek, the developer, appears to have self-reported higher scores than those CAISI obtained in its own benchmark tests of DeepSeek V4 Pro.

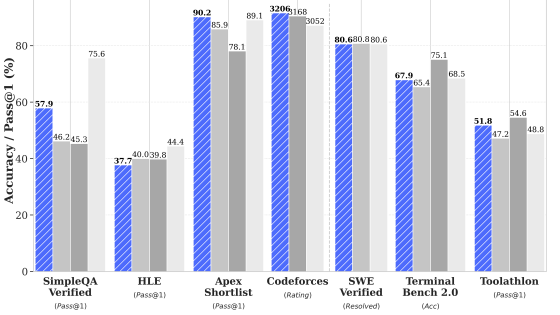

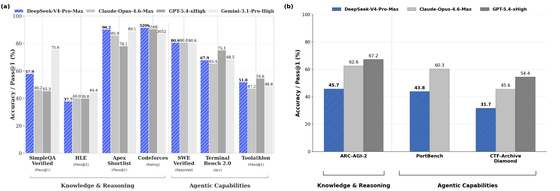

In the graph, (a) shows the benchmark results published by DeepSeek, and (b) shows the results of the benchmark tests conducted by CAISI. According to DeepSeek's self-reported scores, DeepSeek V4 Pro performs comparably to Claude Opus 4.6 and GPT-5.4, which were released in March 2026 (two months ago). In CAISI's tests, however, it only achieved a score equivalent to GPT-5, which was released in August 2025 (eight months ago).

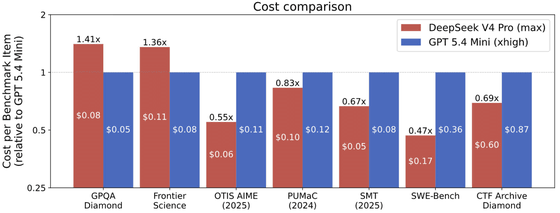

On the other hand, CAISI has rated DeepSeek V4 Pro as 'more cost-effective than other AI models with comparable performance.'

Among American-made AI models, OpenAI's GPT-5.4 mini (blue) is the most cost-effective. Compared with this model, DeepSeek V4 Pro (red) is shown to be more cost-effective in 5 of the 7 benchmark tests. Across all 7 benchmark tests, DeepSeek V4 Pro was 41-53% more cost-effective than GPT-5.4 mini.

According to developer reports, DeepSeek V4 Pro costs $1.74 (approximately 270 yen) per million input tokens without cache, $0.0145 (approximately 2.3 yen) per million input tokens with cache, and $3.48 (approximately 550 yen) per million output tokens. In contrast, GPT-5.4 mini costs $0.75 (approximately 120 yen) per million input tokens without cache, $0.075 (approximately 12 yen) per million input tokens with cache, and $4.50 (approximately 710 yen) per million output tokens.
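To see how these list prices combine for a single workload, the sketch below computes the dollar cost from the per-million-token prices quoted above. The `TokenPrices` class, the `workload_cost` function, and the example token counts are hypothetical and chosen only for illustration. CAISI's cost-efficiency comparison also depends on how many tokens each model consumes per task, which this sketch does not model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrices:
    """List prices in USD per million tokens, as quoted in the article."""
    input_uncached: float
    input_cached: float
    output: float


DEEPSEEK_V4_PRO = TokenPrices(input_uncached=1.74, input_cached=0.0145, output=3.48)
GPT_5_4_MINI = TokenPrices(input_uncached=0.75, input_cached=0.075, output=4.50)


def workload_cost(prices: TokenPrices, uncached_in: int, cached_in: int,
                  out: int) -> float:
    """Total USD cost for a workload with the given token counts."""
    total = (uncached_in * prices.input_uncached
             + cached_in * prices.input_cached
             + out * prices.output)
    return total / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 200k uncached input, 800k cached input, 100k output.
    for name, prices in [("DeepSeek V4 Pro", DEEPSEEK_V4_PRO),
                         ("GPT-5.4 mini", GPT_5_4_MINI)]:
        cost = workload_cost(prices, 200_000, 800_000, 100_000)
        print(f"{name}: ${cost:.4f}")
```

Which model comes out cheaper depends on the mix of cached input, uncached input, and output tokens, so list prices alone do not determine cost-effectiveness.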

Regarding the reasons why two benchmark tests, PortBench and ARC-AGI-2 semi-private, were not used in the cost-efficiency comparison, CAISI explained that 'PortBench is not yet supported in CAISI's cost comparison methodology' and 'ARC-AGI-2 had technical issues in evaluating GPT-5.4 mini.'

in AI, Posted by logu_ii