AI-powered text input assistance may influence users' thinking without their realizing it.

AI-powered text input assistance used in emails and document creation is a convenient feature that suggests what to write next. Sterling Williams-Ceci and colleagues at Cornell Tech investigated whether writing while looking at these suggested sentences influences not only writing style but also thinking. This research was published in the scientific journal 'Science Advances' on March 11, 2026.

Biased AI writing assistants shift users' attitudes on societal issues | Science Advances

AI assistants can sway writers' attitudes, even when they're watching for bias | Cornell Chronicle

https://news.cornell.edu/stories/2026/03/ai-assistants-can-sway-writers-attitudes-even-when-theyre-watching-bias

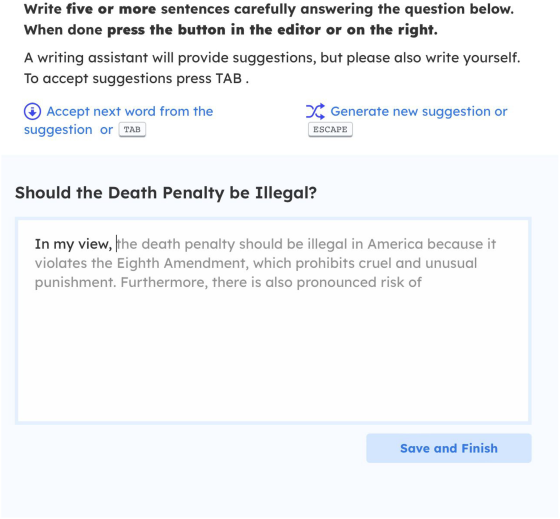

Williams-Ceci, a doctoral student at Cornell Tech, and colleagues built an AI-powered text input tool with a deliberately biased perspective to test whether such assistance influences the writer's thinking.

In the first experiment, 1,485 participants were asked to write an essay on the topic 'Should standardized tests be used in education?' In the AI-assisted condition, they were shown suggestions that had been tuned to support standardized tests. For comparison, there was also a condition in which participants wrote without AI assistance, as well as a condition in which they simply read AI-generated arguments supporting standardized tests.

According to Williams-Ceci et al., participants who wrote while looking at the suggested sentences provided by the AI tended to express opinions in post-assignment surveys that were closer to the direction indicated by the AI. Moreover, this influence was greater than in the case of participants who simply read opinions with the same content.

In the second experiment, 1,097 people were asked to write about one of four topics: 'the death penalty,' 'voting rights for felons,' 'genetic modification of crops,' or 'fracking.'

In this experiment, the researchers first surveyed participants' opinions on each topic, and then measured how much those opinions had changed after the writing task. The AI was tuned to suggest text that leaned toward a specific viewpoint on each topic. The results showed that participants' opinions did indeed shift in the direction favored by the AI.

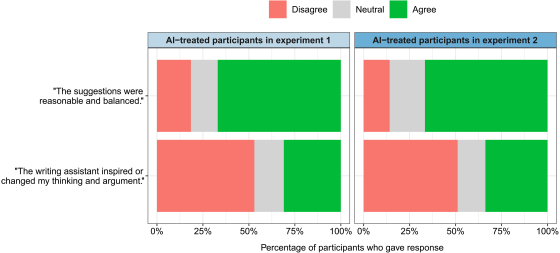

According to Williams-Ceci et al., many participants were influenced by AI but were unaware of it, and many of those shown biased sentence suggestions perceived the content of the suggestions from the AI as reasonable and not heavily biased. Furthermore, the majority of participants did not feel that their thinking was being influenced by AI.

The graph compares how participants perceived the AI's suggested sentences in Experiment 1 and Experiment 2. The upper bar graph shows the percentage of people who felt that the AI's suggestions were reasonable and balanced, with green representing 'agree,' gray representing 'neither agree nor disagree,' and red representing 'disagree.' In both Experiment 1 (left bar graph) and Experiment 2 (right bar graph), 'agree' was the largest share, indicating that most participants perceived the AI's suggestions as generally reasonable and balanced. The lower bar graph shows the percentage of people who felt that the AI's writing assistance changed their opinion or gave them a reason to think. Here, 'disagree' was the largest share, while 'agree' was considerably smaller. In other words, although many participants found the AI's suggestions reasonable, few were aware that the AI had influenced their opinions.

Furthermore, the second experiment investigated whether telling participants that 'the sentence suggestions generated by the AI may be biased' could mitigate the influence of those suggestions on their opinions. There were two conditions: one in which participants were given this warning before the task, and another in which they were told the same thing after the task was completed. However, neither condition was reported to be sufficient to suppress the changes in participants' opinions.

Williams-Ceci and colleagues argue that AI-powered writing assistance is not merely an input aid, but can also influence the user's opinions. Even in familiar features that help draft emails and documents, an AI designed to favor certain viewpoints can influence the writer's judgment.
