Google has announced 'Gemini 3.1 Flash Live,' a voice model with lower latency for real-time conversation and SynthID watermarking. It has also launched 'Search Live,' which lets users search with voice and camera, in Japan and other parts of the world.

Google announced Gemini 3.1 Flash Live, its real-time speech generation AI model, on March 26, 2026, calling it 'the highest quality audio and speech model to date.' The company also announced a global rollout of 'Search Live,' which lets users search using both voice and camera, to all languages and regions where AI Mode is available, including Japan.

Gemini 3.1 Flash Live: Google's latest AI audio model

Google Search Live expands globally

https://blog.google/products-and-platforms/products/search/search-live-global-expansion/

Gemini 3.1 Flash Live is our highest-quality audio and voice model yet.

Voice capabilities have come a long way and are a big part of how we interact with AI to get things done. 3.1 Flash Live's improved precision and reasoning make those interactions more natural and intuitive.… pic.twitter.com/Ib1Y6uH80i

— Sundar Pichai (@sundarpichai) March 26, 2026

As of this writing, Gemini 3.1 Flash Live is available to everyone through Search Live and Gemini Live. Developers can try it in preview via the Gemini Live API in Google AI Studio, and businesses can use it through Gemini Enterprise for Customer Experience.

Google described Gemini 3.1 Flash Live as having 'improved overall quality, making it more reliable for developers and businesses to build voice-first agents that can perform complex tasks at scale.'

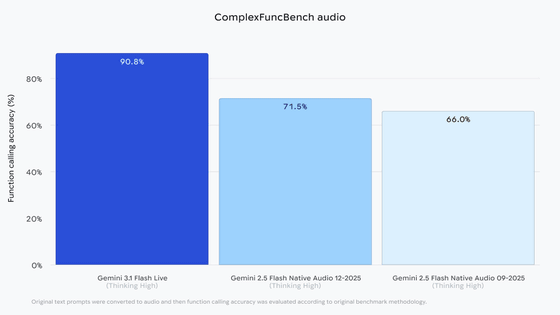

On ComplexFuncBench Audio, a benchmark that measures multi-step function calling under various constraints, Gemini 3.1 Flash Live scored 90.8%, the top result and an improvement over its predecessor, Gemini 2.5 Flash Native Audio 12-2025.


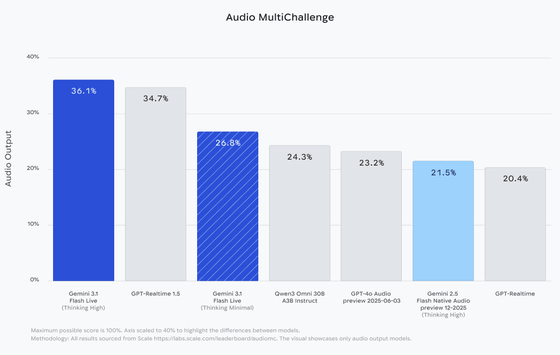

On Scale AI's Audio MultiChallenge, Gemini 3.1 Flash Live took the top spot with a score of 36.1% with its 'Thinking' feature enabled. This benchmark specifically tests a system's ability to follow complex instructions and perform long-horizon reasoning amid the hesitations and interruptions typical of real-world speech.

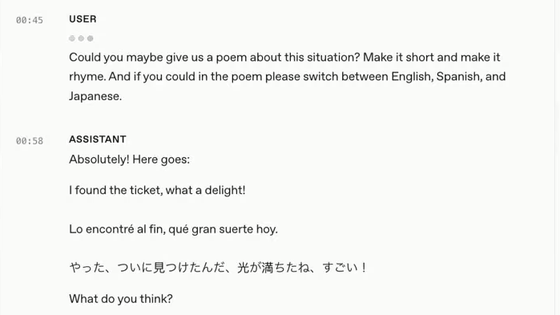

According to Google, Gemini 3.1 Flash Live has improved tonal understanding, which makes conversations feel more natural. In Gemini Enterprise for Customer Experience, Gemini 3.1 Flash Live is said to recognize acoustic nuances such as pitch and pace more effectively than 2.5 Flash Native Audio, and to be better at dynamically adjusting its responses to emotional cues such as user frustration or confusion.

Say hello to Gemini 3.1 Flash Live.

Our latest audio model delivers more natural conversations with improved function calling – making it more useful and informed. Here's what's new pic.twitter.com/uv8cW447kE

— Google DeepMind (@GoogleDeepMind) March 26, 2026

In Gemini Live and Search Live, Gemini 3.1 Flash Live delivers more natural responses, whether you're asking simple everyday questions or engaging in more complex conversations. Google emphasized that Gemini Live in particular now runs on the new model, which gives it faster response times and lets it follow the thread of a conversation for twice as long as the previous model, allowing for uninterrupted brainstorming sessions.

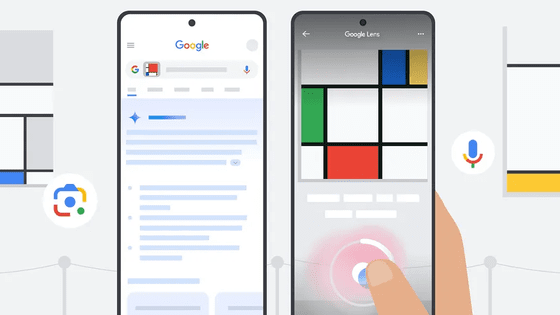

Search Live, which was previously available only in the US and India, is now available in all languages and regions where AI Mode is available, including Japan. To use it, open the Google app on Android or iOS and tap the Live icon below the search bar.

Search Live launches globally

According to Google, all audio generated with 3.1 Flash Live is watermarked with SynthID. This invisible watermark is embedded directly in the audio output and, Google says, helps reliably identify AI-generated content and curb the spread of misinformation.
