OpenAI Announces Codex Security, an AI Agent for Automating Vulnerability Discovery, Verification, and Fixing

OpenAI has announced Codex Security, an AI agent that automates code security reviews. It can identify complex vulnerabilities that other agent tools miss and suggest fixes, significantly improving system security.

Codex Security: now in research preview | OpenAI

https://openai.com/index/codex-security-now-in-research-preview/

We're introducing Codex Security.

An application security agent that helps you secure your codebase by finding vulnerabilities, validating them, and proposing fixes you can review and patch.

Now, teams can focus on the vulnerabilities that matter and ship code faster.… pic.twitter.com/t45Wkm7Rda

— OpenAI Developers (@OpenAIDevs) March 6, 2026

According to OpenAI, context is essential to assessing real security risk, but most AI security tools simply flag low-impact findings and false positives, leaving human security teams to spend a great deal of time verifying them. At the same time, as AI accelerates software development, speeding up security reviews has become a difficult and important problem. To address these issues, OpenAI announced Aardvark, an AI agent that 'reads code like a human and takes security measures,' in October 2025.

OpenAI announces GPT-5-based vulnerability detection tool 'Aardvark,' already in operation within OpenAI - GIGAZINE

Aardvark was used in production inside OpenAI and launched in private beta with a small number of users. During the beta period, the quality of its findings improved significantly: noise was reduced, severity ratings became more accurate, and false positives fell. Codex Security is the latest evolution of Aardvark.

Codex Security began as Aardvark, launched last year in private beta.

Since then, we've significantly improved signal quality, reducing noise, improving severity accuracy, and lowering false positives, so findings better align with real-world risk. https://t.co/nGbXV5leVY

— OpenAI Developers (@OpenAIDevs) March 6, 2026

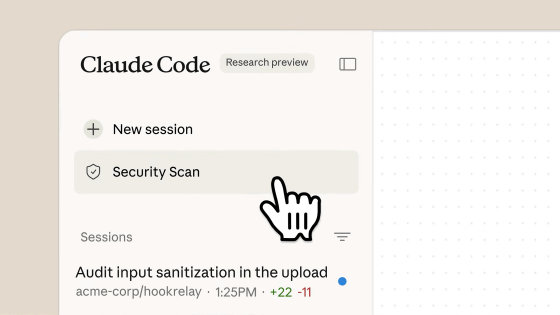

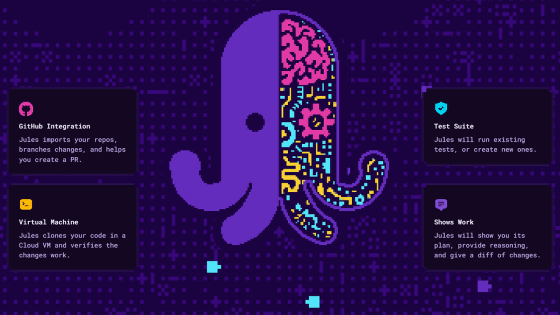

Codex Security combines reasoning from OpenAI's cutting-edge AI models with automated verification by Codex agents to provide highly reliable findings and actionable fixes. Codex Security's features include:

・Understand the system structure and automatically create a threat model

When you configure a scan, it analyzes your repository to understand the system's structure and security-critical points. It then automatically generates a threat model for your project that outlines what the system does, who it trusts, and where it is vulnerable to attack. This threat model can also be edited by the user, allowing you to coordinate with the agent and your team.

・Prioritize detected vulnerabilities and verify whether they are real problems

Codex Security investigates the code based on the threat model it created and detects vulnerabilities. It prioritizes the issues it finds by how much impact they would have on the actual system and, when possible, tests them in a sandbox environment to confirm whether they are real issues or false positives. This detailed testing reduces false positives and can also produce working proofs of concept (PoCs), giving security teams stronger evidence and clearer remediation steps.

・Propose corrections that understand the context of the entire system

For discovered vulnerabilities, Codex Security suggests fixes that take into account the system's design intent and the behavior of the surrounding code. This makes it possible to strengthen security while minimizing 'regressions' that break existing functionality. Users can also filter results to focus on the most critical issues.
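The prioritize, verify, and filter flow described above can be pictured with a small sketch. This is only an illustration under stated assumptions, not Codex Security's actual data model or API: the `Finding` class, the `validated` flag, the four-level severity scale, and the `triage` function are all hypothetical names introduced here.

```python
from dataclasses import dataclass

# Hypothetical severity scale; the article does not specify one.
SEVERITY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3}


@dataclass
class Finding:
    title: str
    severity: str    # one of the keys in SEVERITY_RANK
    validated: bool  # assumed flag: True if the issue was reproduced in a sandbox


def triage(findings, max_severity="high"):
    """Keep validated findings at or above max_severity, most severe first."""
    cutoff = SEVERITY_RANK[max_severity]
    kept = [
        f for f in findings
        if f.validated and SEVERITY_RANK[f.severity] <= cutoff
    ]
    return sorted(kept, key=lambda f: SEVERITY_RANK[f.severity])


# Made-up example findings, for illustration only.
findings = [
    Finding("Verbose error page", "low", True),
    Finding("SQL injection in search endpoint", "critical", True),
    Finding("Possible SSRF in webhook fetcher", "high", False),
    Finding("Missing authorization check", "high", True),
]

for f in triage(findings):
    print(f.severity, "-", f.title)
```

In this sketch, the unvalidated SSRF report and the low-severity item are dropped, so a reviewer would see only the two confirmed, high-impact findings.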

According to OpenAI, Codex Security scanned more than 1.2 million external repositories in the beta cohort and identified 792 critical findings and 10,561 high-severity findings. Critical issues accounted for less than 0.1% of what was scanned, which suggests the system avoids the problem of flagging a large number of issues and increasing the burden on reviewers. OpenAI states, 'This will enable development teams to focus on critical vulnerabilities and release secure code more quickly.'
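As a quick sanity check on the 'less than 0.1%' figure, the ratio can be worked out from the numbers quoted above. The sketch assumes a denominator of exactly 1.2 million; since the article says 'more than 1.2 million,' the computed shares are upper bounds.

```python
scanned = 1_200_000  # lower bound from the article ("more than 1.2 million")
critical = 792
high = 10_561

# Shares are upper bounds because the true denominator is larger.
critical_share = critical / scanned
high_share = high / scanned

print(f"critical: {critical_share:.3%}")  # critical: 0.066%
print(f"high:     {high_share:.3%}")      # high:     0.880%
```

Under that assumption, critical findings come to roughly 0.066% of scanned items, which is consistent with the sub-0.1% claim.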

Sean Moriarity, a developer who has actually used Codex Security, praised it, saying, 'I ran it on our codebase, and over about 24 hours it scanned almost 5,000 commits and found 275 issues. I've already merged 15 of the PRs Codex suggested, most of which required no iteration. The threat model created by Codex Security is very accurate and detailed, and I feel that the detection accuracy is also very high. I plan to share accurate statistics once I've verified all the results.'

We ( @get_mocha ) got access to this and ran it on our codebase yesterday. It took ~24 hours and scanned almost 5000 commits, and found 275 issues. I've merged 15 codex suggested PRs so far (most of which required zero iteration) and am working my way through the rest the rest of… https://t.co/kC7ajO07O4

— Sean Moriarity (@sean_moriarity) March 6, 2026

Codex Security will be available as a research preview to ChatGPT Enterprise, Business, Edu, and Pro users, and is expected to be free to use in April 2026.
