OpenAI loosens its criteria for reporting accounts to law enforcement after a shooting suspect's ChatGPT threats went unreported

After it emerged that the suspect in a Canadian school shooting had interacted with ChatGPT, OpenAI promised Canadian authorities that it will strengthen its safety measures. The changes include more flexible criteria for reporting accounts to law enforcement and the possibility of notifying police when it detects policy violations that could lead to serious crimes.

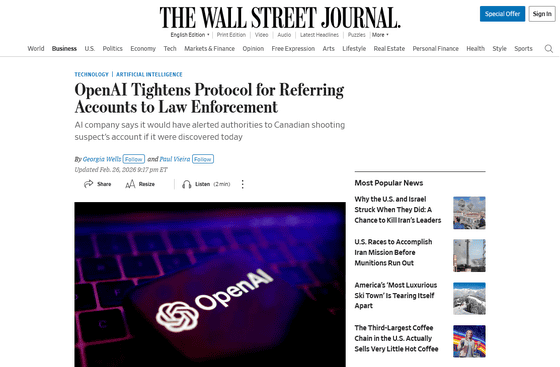

OpenAI Tightens Protocol for Referring Accounts to Law Enforcement - WSJ

In February 2026, a school shooting occurred in British Columbia, Canada. Eight people were killed and dozens were injured, and the suspect, an 18-year-old man, was found dead at the school from what appeared to be a suicide.

The Wall Street Journal reported that the suspect had been making threats of gun violence on ChatGPT several months before the incident. According to the report, the suspect's interactions were flagged by an automated review system, and about 12 staff members met to suggest to executives that the suspect be reported to Canadian law enforcement. Although the account was suspended, no report was made.

Following the incident, OpenAI stated in a letter to Evan Solomon, Canada's Minister of Artificial Intelligence: 'We will implement more flexible standards for reporting accounts to law enforcement. We will also establish a direct line of communication with Canadian law enforcement to direct users in need to the appropriate resources, and strengthen our detection systems to prevent evasion of safety measures.' The letter added, 'Based on our enhanced law enforcement engagement protocols, if the suspected shooter's account had been discovered today, we would have reported it to law enforcement.'

'These immediate efforts are just the first step in our work with the Government of Canada to improve AI safety. We are committed to strengthening our detection systems,' said Anne O'Leary, OpenAI's vice president of global policy, in the letter.

At a meeting held in Ottawa after the incident, Solomon stated, 'We are disappointed that OpenAI has failed to present "substantial new safeguards."' Regarding the letter, Solomon's spokesperson said, 'We are carefully reviewing OpenAI's letter and will make further announcements in the coming days.' In addition, Canada's Minister of Justice, Sean Fraser, warned that 'unless companies implement policy changes promptly, the federal government is prepared to introduce new regulatory measures to restrict AI.' Attention is now focused on how AI companies will respond and how they will work with the government.

in AI, Posted by log1e_dh