Claude Code tends to build custom solutions, but what are its go-to tools?

Amplifying, a developer of AI evaluation frameworks, has published a research report that systematically analyzes 2,430 tool selections made by Claude Code across three models and four project types. The research shows that the AI agent is more likely to build its own custom solution than to recommend a third-party tool, but it also reveals that a handful of recommended tools dominate certain categories.

What Claude Code Actually Chooses — Amplifying

Claude Code built its own implementation using framework primitives or environment variables, rather than adopting an existing library, in 12 of the 20 categories surveyed. Custom builds appeared in areas such as feature flags (69%), Python authentication (100%), and observability (22%). The model favored simple implementations that could be completed within the current codebase over complex external services.

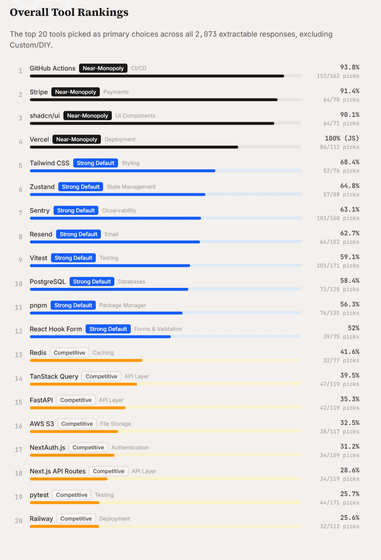
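To illustrate what such a custom build might look like, here is a minimal sketch of a feature flag driven by an environment variable, written in Python. It is not taken from the Amplifying report: the function name `flag_enabled`, the `FEATURE_` prefix, and the set of truthy values are all assumptions made for illustration.

```python
import os

# Hypothetical convention: a flag named "new_checkout" is controlled by the
# environment variable FEATURE_NEW_CHECKOUT. The prefix is an assumption.
TRUTHY = {"1", "true", "yes", "on"}


def flag_enabled(name, default=False, env=None):
    """Return True if FEATURE_<NAME> is set to a truthy value.

    `env` defaults to os.environ; passing a plain dict makes testing easier.
    """
    env = os.environ if env is None else env
    raw = env.get(f"FEATURE_{name.upper()}")
    if raw is None:
        # Variable not set at all: fall back to the caller's default.
        return default
    return raw.strip().lower() in TRUTHY


if __name__ == "__main__":
    if flag_enabled("new_checkout"):
        print("new checkout flow enabled")
    else:
        print("new checkout flow disabled")
```

A helper like this lives entirely inside the codebase and needs no external service, which is the kind of trade-off the report describes the model making.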

However, in certain categories a single tool holds a near-monopoly, forming the standard stack for AI-driven development. In CI/CD, for example, GitHub Actions was chosen an overwhelming 93.8% of the time, effectively eliminating competitors like GitLab CI and Jenkins as primary recommendations.

Stripe is likewise the clear default for payments at 91.4%, and shadcn/ui for UI components at 90.1%. Deployment preferences split cleanly by ecosystem: Vercel was recommended 100% of the time for JavaScript projects, and Railway 82% of the time for Python projects.

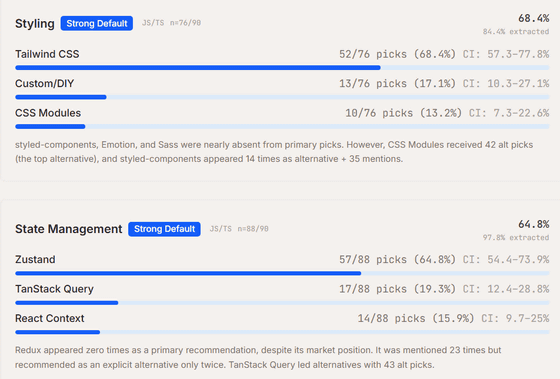

Other key tools that have established themselves as strong defaults include Tailwind CSS for styling (68.4%) and Zustand for state management (64.8%), while Sentry for observability (63.1%) and Resend for email delivery (62.7%) are also chosen by large margins over their alternatives.

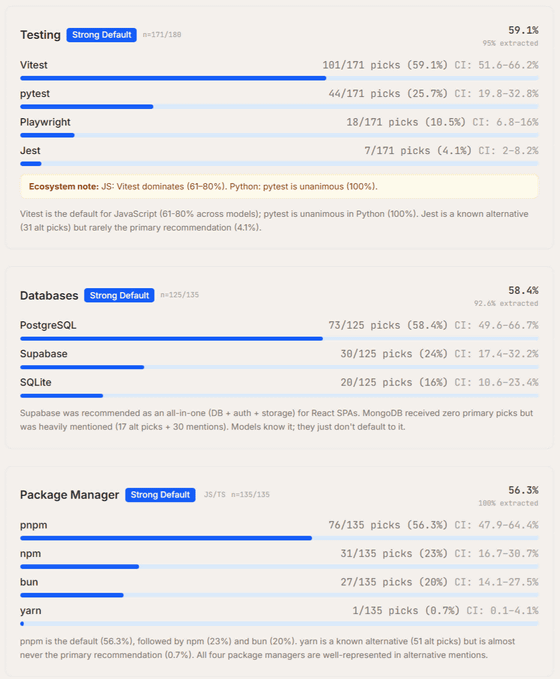

Among testing frameworks, Vitest leads JavaScript with 59.1% of selections, while pytest was chosen 100% of the time for Python. Among databases, PostgreSQL maintains its lead with 58.4%, and among package managers, pnpm (56.3%) is recommended over npm (23%) and bun (20%).

The report also observed that how recent the model is influences tool selection. With Opus 4.6, the latest model at the time of writing, there was a dramatic change: Prisma, which Sonnet 4.5 favored at 79%, dropped to 0%, and Drizzle was adopted instead at 100%. Similarly, recommendations for JavaScript job management are moving from BullMQ to Inngest. The AI agent's recommendations keep adapting to the latest trends, reflecting the composition of the training data.

Amplifying concludes that Claude Code has become the new gatekeeper for software distribution, and that its selections are a crucial channel determining market share. Because the AI agent directly builds the tools it recommends into projects, the content of the model's training data will influence tool adoption more than traditional marketing efforts.

The strong 'make over buy' preference for custom builds seen in many categories poses a major challenge for tool vendors, who must either turn their products into primitives for agents to build upon or demonstrate superiority over solutions that AI can quickly create. Furthermore, Amplifying noted that the tendency for newer models to select newer tools creates a feedback loop that generates more training data and accelerates adoption, reshaping the entire development ecosystem.

in AI, Posted by log1i_yk