TPU vs GPU: Why Google is positioned to win the AI race in the long run

Generally, GPUs, which excel at parallel computing, are used for machine learning calculations. However, Google, which develops Gemini and other platforms, has developed its own

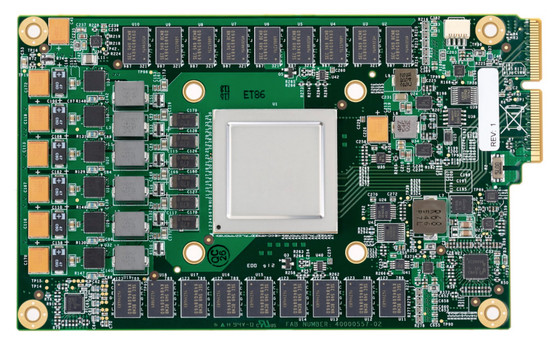

The chip made for the AI inference era – the Google TPU

https://www.uncoveralpha.com/p/the-chip-made-for-the-ai-inference

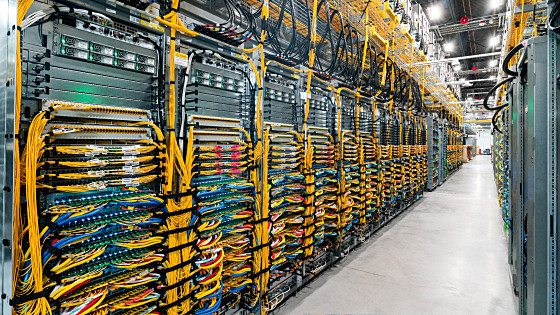

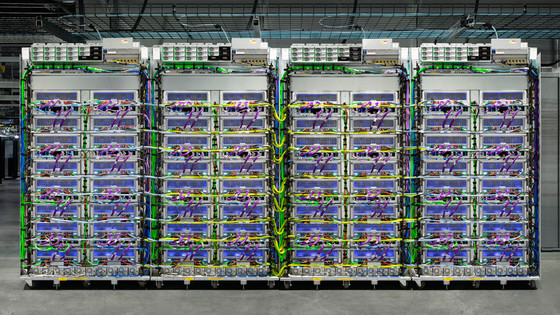

Google's decision to develop its own chips wasn't motivated by a technological breakthrough, but by a sense of crisis over future computing resources. Around 2013, Google estimated that if every Android user used its voice search feature for just three minutes a day, it would need twice as much data center capacity to handle the increased computing power.

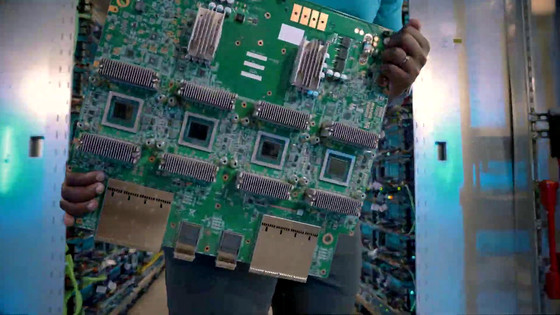

The standard CPUs and GPUs that Google used at the time were inefficient at handling the massive matrix calculations required for deep learning, and scaling up on existing hardware was economically and logistically difficult. Google decided to develop its own ASIC (application-specific integrated circuit) specifically for running neural networks, called 'TensorFlow.'

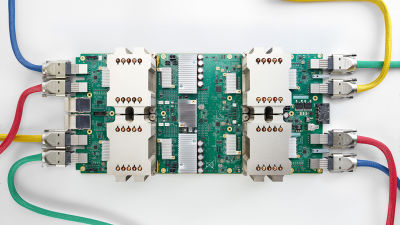

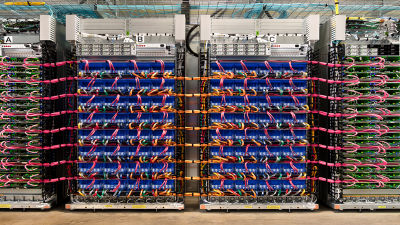

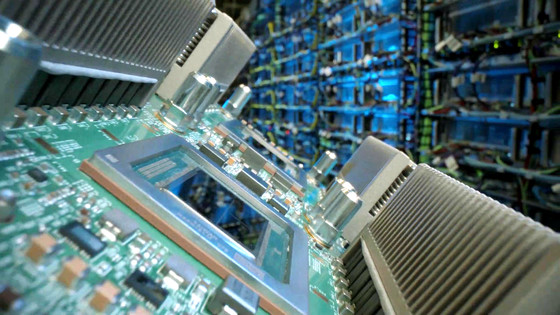

Development of this neural network-specialized ASIC, the TPU, progressed rapidly, and in 2015, just 15 months after design began, it began to be deployed in data centers, and it now powers Google Maps, Google Photos, translation functions, and more.

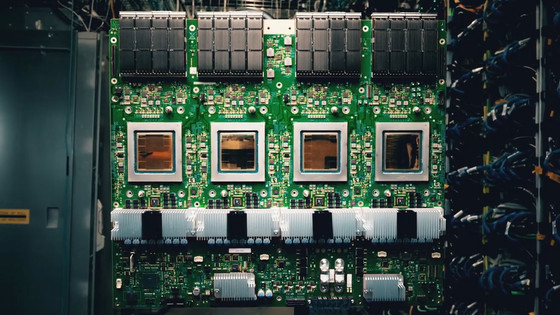

The biggest difference between a GPU and a TPU is whether they are general-purpose or specialized in a specific area. GPUs are designed for graphics processing and excel at parallel processing, but they also have complex functions such as cache management and branch prediction to handle a wide range of tasks, from texture processing in games to scientific simulations.

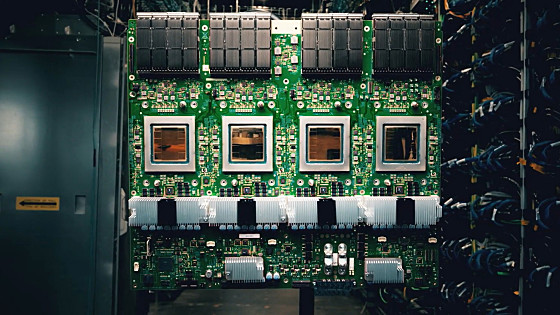

The TPU, on the other hand, eliminates these unnecessary functions and adopts a unique structure called a 'systolic array.' Conventional CPUs and GPUs require data to be exchanged between memory and the arithmetic unit for each calculation, creating a bottleneck in processing speed. However, the TPU's systolic array allows data to flow unidirectionally within the chip, much like the heart pumping blood. Once data (weights) is loaded, it is processed one after the other without being written back to memory, dramatically reducing the number of memory accesses and allowing processing power to be concentrated on the calculation itself.

Although information about the TPUv7, codenamed '

For certain applications, TPUs are said to be more cost-effective and power-efficient than NVIDIA GPUs. According to Jaak, a former Google employee stated that 'for the right application, TPUs are up to 1.4 times more cost-effective than GPUs,' and they also consume less energy and generate less heat. Additionally, Google lowers the cost of older TPUs as new generations of TPUs are released, offering the added benefit of lower costs for users who don't require cutting-edge speeds.

The biggest barrier to widespread adoption of TPUs in the general enterprise lies in the software ecosystem: While many AI engineers study NVIDIA's CUDA platform at university, which has become the industry standard, TPUs primarily use different languages and libraries, such as JAX and TensorFlow.

Additionally, many companies use multiple cloud services and tend to avoid depending on a specific cloud. NVIDIA GPUs are available on AWS, Azure, and Google Cloud, but TPUs are only available on Google Cloud. If companies become completely dependent on TPUs, there is a concern that the cost and effort of migrating to another cloud provider could become enormous if Google raises prices in the future.

In the age of AI, the profit margins of the cloud business are being squeezed by the high cost of NVIDIA chips, but having our own chips is a solution. By developing and operating our own ASICs, we can save the high profit margins we pay to NVIDIA and regain our former high profit margins.

Google is the most mature in this field, controlling everything from chip design to software optimization in-house. Currently, Google's latest model, Gemini 3, is trained using a TPU, and TPUs are used for almost all of the company's internal AI inference workloads. Jaak predicted that this strategy of providing familiar NVIDIA GPUs to external customers while fully adopting cost-effective TPUs for its own service infrastructure will be the biggest competitive advantage for Google's cloud business over the next 10 years.

In addition to the message 'We are pleased with Google's success,' NVIDIA also emphasized NVIDIA's superiority by saying, 'Google also uses NVIDIA's GPUs for development, and GPUs are superior to TPUs in versatility.'

We're delighted by Google's success — they've made great advances in AI and we continue to supply to Google.

— NVIDIA Newsroom (@nvidianewsroom) November 25, 2025

NVIDIA is a generation ahead of the industry — it's the only platform that runs every AI model and does it everywhere computing is done.

NVIDIA offers greater…

Related Posts: